How a Microprocessor Works: Fetch-Decode-Execute Deep Dive

TL;DR: A microprocessor repeats one loop forever: fetch the next instruction from memory using the program counter, decode it into control signals, then execute it on the ALU and registers. Every modern feature — pipelining, branch prediction, caches — is an optimization of those three steps. We trace the loop bit-by-bit, microoperation by microoperation, then layer on the architectural ideas that turn a single-cycle CPU into something a billion times faster.

Microprocessors look like magic from the outside. A square of silicon takes in a stream of bytes and produces video games, spreadsheets, web servers, and self-driving cars. Inside, though, it is doing one thing: reading numbers from memory, interpreting each number as an instruction, and acting on it. That single behavior — the fetch-decode-execute cycle — is the heartbeat of every CPU ever built, from the Intel 4004 to the chip in the device you are reading this on.

This is the deep dive. We will cover the von Neumann architecture and how it differs from the Harvard model, the buses that connect the CPU to memory, the exact microoperations that make up a fetch, how the control unit transforms an opcode into wires that go HIGH and LOW, what the ALU does during execute, how branches re-aim the program counter, the memory hierarchy that makes any of this fast, and finally a preview of pipelining, RISC vs CISC, and the modern extensions that push a single instruction stream into superscalar territory. If you have already read our case study on visualizing the fetch-decode-execute cycle, this post extends that walkthrough into a full architectural reference. If not, start there for a gentler introduction and return here for the textbook treatment.

What Is the Von Neumann Architecture, and Why Does It Win?

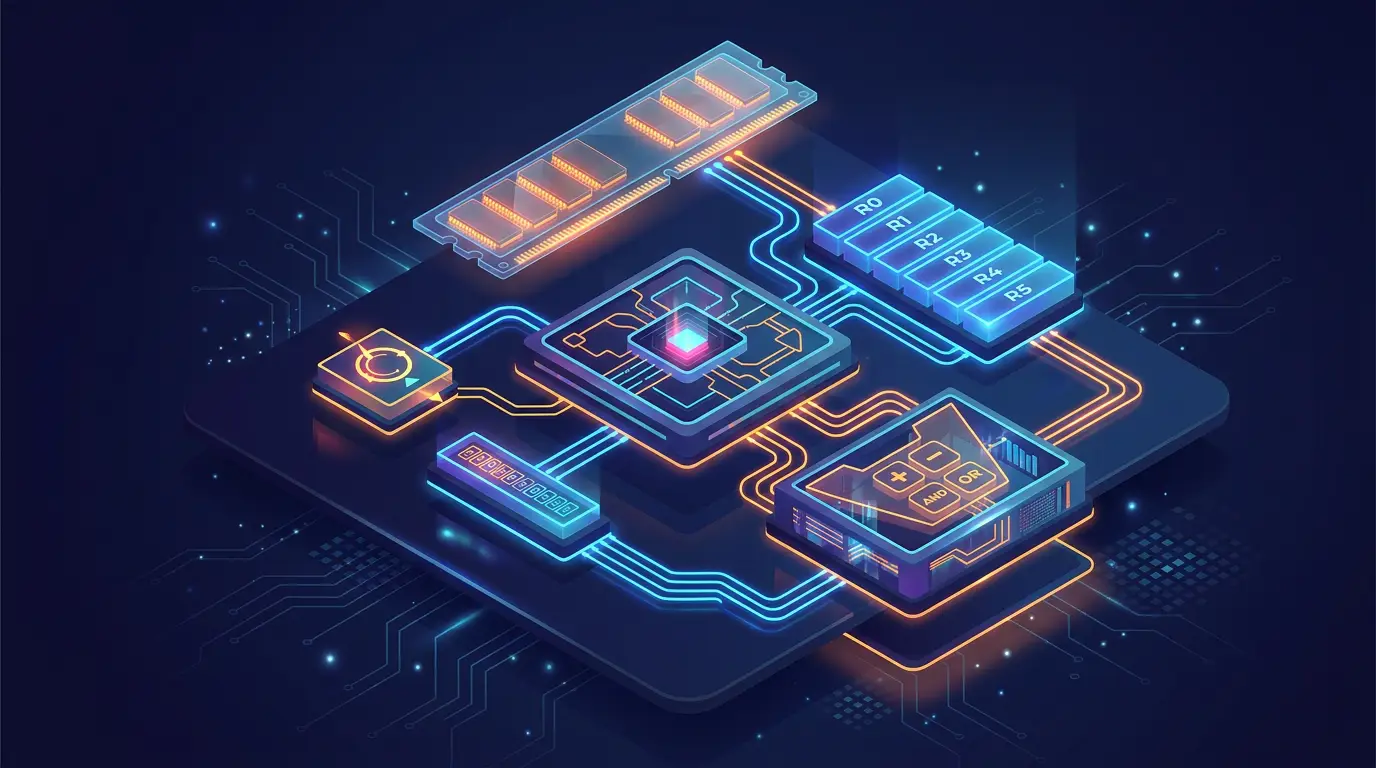

The defining diagram of computer architecture is a sketch John von Neumann published in 1945. It shows a single memory connected to a CPU by a single bus, with the CPU split into a control unit and an arithmetic-logic unit. That picture, called the von Neumann architecture, is what almost every general-purpose processor still implements eighty years later.

    +-----------------------------+
    |           MEMORY            |
    |    (instructions + data     |
    |   share one address space)  |
    +--------------+--------------+
                   |
        Address / Data / Control
                   |
    +--------------+--------------+
    |             CPU             |
    | +------------------------+  |
    | |   Control Unit (CU)    |  |
    | +------------------------+  |
    | | Arithmetic-Logic Unit  |  |
    | +------------------------+  |
    | |  Registers / PC / IR   |  |
    | +------------------------+  |
    +-----------------------------+
                   |
         Input / Output devices

The radical move was treating instructions as data. Earlier machines like the ENIAC were rewired by hand to run a new program. Von Neumann said: put the program inside the same memory as the operands, and the machine becomes universal: it runs whatever program you load, with no rewiring. That stored-program idea is why a phone, a server, and a microwave can all use the same instruction set.

Von Neumann vs Harvard

A small but important sibling is the Harvard architecture, which uses separate memories (and separate buses) for instructions and data. DSPs, microcontrollers like the AVR, and the L1 cache layer of nearly every modern CPU are Harvard-style.

| Property | Von Neumann | Harvard |
|---|---|---|
| Instruction & data memory | Same | Separate |
| Buses | One shared | Two independent |
| Bottleneck | Cannot fetch instr + data simultaneously | Both fetched in parallel |
| Self-modifying code | Trivial | Awkward (different memory space) |
| Typical use | General-purpose CPUs | DSPs, microcontrollers, L1 cache |

In practice, every modern desktop CPU is a hybrid: von Neumann at the main-memory level (one address space, one DRAM), Harvard at the L1 level (split I-cache and D-cache so fetch and load can happen the same cycle). This mirrors a theme we will see again: real machines are layered approximations of clean textbook models.

What Are the Buses Between the CPU and Memory?

Before we trace an instruction, we need vocabulary for the wires. The CPU and memory talk over three logical buses.

| Bus | Direction | Typical width (8-bit / 32-bit / 64-bit CPU) | Purpose |
|---|---|---|---|
| Address bus | CPU → memory | 16 / 32 / 48 bits | Which byte to access |
| Data bus | Bidirectional | 8 / 32 / 64 bits | The byte being transferred |
| Control bus | Mixed | a handful of lines | RD, WR, MEM_REQ, READY, INT, RESET |

The address bus width sets the maximum addressable memory: a 32-bit address bus reaches $2^{32}$ bytes (4 GiB), which is exactly why 32-bit operating systems hit a 4 GB ceiling. The data bus width sets how many bytes the CPU can move per bus transfer. The control bus is the thinnest but most subtle: it carries the timing and direction signals that turn raw wires into a coherent transaction.
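As a quick check of that arithmetic, the sketch below computes the address space for the three address-bus widths in the table. The helper name `addressable_bytes` is ours (not from any library), and it assumes one byte per address.

```python
def addressable_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses an address bus of this width can select."""
    return 2 ** address_bits


GIB = 2 ** 30  # one gibibyte, in bytes

for bits in (16, 32, 48):
    total = addressable_bytes(bits)
    print(f"{bits}-bit address bus: {total} bytes ({total / GIB:g} GiB)")
```

Each extra address line doubles the reachable memory, which is why the jump from 32 to 48 bits is a factor of 65,536 rather than 1.5.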

A typical read transaction:

    T0: CPU drives ADDRESS lines, asserts MEM_REQ + RD
    T1: Memory decodes address, places byte on DATA lines, asserts READY
    T2: CPU latches DATA into a register, deasserts MEM_REQ

A write looks the same except the CPU drives the data lines and asserts WR instead of RD. The CPU’s own internal storage (its registers) does not use this bus. Inside the chip, transfers happen at clock-cycle speeds across short wires, while memory transactions happen across centimeters of trace at much slower speeds. We will return to this latency gap when we discuss the memory hierarchy.
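To make the handshake concrete, here is a minimal sketch of the three-step read in Python. The `Bus` class and `cpu_read` function are hypothetical names for illustration; the signal names come from the control-bus row above, and modelling memory as a dictionary is a simplification.

```python
from dataclasses import dataclass, field


@dataclass
class Bus:
    """The three logical buses, modelled as plain fields (a simplification)."""
    address: int = 0
    data: int = 0
    mem_req: bool = False
    rd: bool = False
    ready: bool = False
    log: list = field(default_factory=list)


def cpu_read(bus: Bus, memory: dict, address: int) -> int:
    # T0: CPU drives the address lines and asserts MEM_REQ + RD.
    bus.address, bus.mem_req, bus.rd = address, True, True
    bus.log.append("T0: address driven, MEM_REQ + RD asserted")
    # T1: memory decodes the address, drives the data lines, asserts READY.
    bus.data, bus.ready = memory[bus.address], True
    bus.log.append("T1: data driven, READY asserted")
    # T2: CPU latches the data and deasserts MEM_REQ.
    # Clearing RD and READY in the same step is our assumption.
    latched = bus.data
    bus.mem_req = bus.rd = bus.ready = False
    bus.log.append("T2: data latched, MEM_REQ deasserted")
    return latched


bus = Bus()
value = cpu_read(bus, {0x10: 0xAB}, 0x10)
print(hex(value), bus.log)
```

A write would follow the same three steps with the CPU, rather than memory, driving `bus.data`.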

If buses feel abstract, build a tiny one yourself. Open the RAM with address control template and watch what happens to the address lines as the controller steps through addresses. Then pair that with the basic RAM memory system to see read and write timing. Memory primitives are documented in detail under the RAM component reference and the ROM component reference.

How Does the Fetch Phase Work, Microoperation by Microoperation?

Fetch is the act of pulling the next instruction word from memory into the CPU. It seems trivial — IR = MEM[PC]; PC = PC + 1 — but a synchronous digital design has to break that one C statement into a sequence of register-transfer microoperations, each of which fits in a single clock cycle. (For the rest of this section we treat memory as word-addressed at the instruction level: every PC value indexes one full instruction word, and PC + 1 advances by one word. Real ISAs vary — RISC-V is byte-addressed with 32-bit instructions and so increments by 4 — but the microoperation structure is the same.)

Microoperations are written in register-transfer language (RTL). A typical fetch on a simple CPU looks like this:

    T0: MAR <- PC                      ; copy PC to memory-address register
    T1: MDR <- MEM[MAR], PC <- PC + 1  ; start memory read, increment PC
    T2: IR  <- MDR                     ; latch the instruction

Three clock cycles and four register transfers, with only the PC increment overlapping the memory read. Fetch must be split this way because each transfer uses a different part of the datapath, and the shared bus has to be driven by a single source and stable before the latching edge of the clock arrives.

A few details that matter:

- Why MAR exists. The CPU could in principle drive PC directly onto the address bus. In practice, you want a dedicated buffer (the MAR) so the program counter is free to change while the memory access is in flight. Most modern designs hide the MAR but the role is still there.

- Why PC increments during T1. The increment uses an adder that is independent of the ALU. Doing the increment in parallel with the memory read means we do not waste a cycle on it. This is the simplest example of hardware parallelism inside a CPU: two independent units doing useful work in the same cycle.

- How the IR latches. The IR is just a register — usually a bank of edge-triggered D flip-flops. Its load enable is asserted at T2 by the control unit, and on the rising edge it captures whatever is on its data input.
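The three fetch microoperations can be sketched in a few lines of Python. This is an illustrative model, not the simulator template: registers are entries in a dictionary, memory is a word-addressed list as assumed above, and the instruction word `0x1123` is just an example value.

```python
def fetch(state: dict, memory: list) -> None:
    """Run the three fetch microoperations on a dict of register values."""
    # T0: MAR <- PC
    state["MAR"] = state["PC"]
    # T1: MDR <- MEM[MAR] and PC <- PC + 1 happen in the same cycle;
    # the increment uses its own adder, so it does not depend on the read.
    state["MDR"] = memory[state["MAR"]]
    state["PC"] = state["PC"] + 1
    # T2: IR <- MDR
    state["IR"] = state["MDR"]


memory = [0] * 32
memory[0x10] = 0x1123  # one 16-bit instruction word (example value)
state = {"PC": 0x10, "MAR": 0, "MDR": 0, "IR": 0}
fetch(state, memory)
print({name: hex(value) for name, value in state.items()})
```

After the call, the MAR still holds the old address while the PC has already moved on, which is exactly the decoupling the MAR exists to provide.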

You can watch every one of these microoperations happen in the sequential instruction executor template. Pause it between cycles, hover over MAR and MDR, and you will see exactly which value is being moved where. For a curriculum-level walkthrough of the Program Counter itself, see our deep dive on program counters and the program counter component.

Fetch as a state machine

Conceptually, fetch is a tiny state machine. The control unit has a 2-bit timing counter that cycles T0 → T1 → T2 → T0 → ..., and at each timing state it asserts a different set of register-transfer enables. (Once the execute states T3 to T5 are added, the counter needs a third bit.) That counter is built from J-K flip-flops or T flip-flops and is driven by the same clock that everything else uses, which we have called elsewhere the computer’s heartbeat.

How Does the Decode Phase Generate Control Signals?

Once the instruction word is sitting in the IR, the CPU has 16 bits (or 32, or 64) of binary that means something. The job of decode is to turn that meaning into a set of physical control signals — wires that go HIGH or LOW to route data through the right registers and the right ALU operation.

Instruction format

Almost every instruction set splits the bits into fields. A register-mode instruction in our example CPU uses this layout:

    | 15 14 13 12 | 11 10 9 8 | 7 6 5 4 | 3 2 1 0 |
    |   OPCODE    |   REG_A   |  REG_B  |  REG_D  |

- Opcode (4 bits): which operation. With 4 bits we get 16 distinct instructions, which is enough for ADD, SUB, AND, OR, XOR, LD, ST, JMP, BEQ, BNE, NOP, MOV, INC, DEC, NOT, HLT.

- REG_A, REG_B: source register indexes (each a 4-bit binary index, so $2^4 = 16$ registers).

- REG_D: destination register index.

Immediate-mode formats sacrifice one register field for an immediate value, e.g.

    | 15 14 13 12 | 11 10 9 8 | 7 6 5 4 3 2 1 0 |
    |   OPCODE    |   REG_D   |    IMMEDIATE    |

Opcode extraction

Decode physically splits the IR into its fields. The OPCODE bits feed into a 4-to-16 decoder; each output line corresponds to one possible instruction and is HIGH only when that instruction is in flight. We have a full post on decoders and encoders and a component reference for the decoder primitive.
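In software terms, field extraction is shifting and masking, and the 4-to-16 decoder is a one-hot conversion. The sketch below assumes the register-mode layout shown earlier; the function names and the example word `0x1123` are ours.

```python
def split_fields(ir: int) -> dict:
    """Slice a 16-bit register-mode instruction word into its four 4-bit fields."""
    return {
        "opcode": (ir >> 12) & 0xF,  # bits 15..12
        "reg_a": (ir >> 8) & 0xF,    # bits 11..8
        "reg_b": (ir >> 4) & 0xF,    # bits 7..4
        "reg_d": ir & 0xF,           # bits 3..0
    }


def decode_4_to_16(opcode: int) -> list:
    """4-to-16 decoder: exactly one of the 16 output lines is 1."""
    return [1 if line == opcode else 0 for line in range(16)]


fields = split_fields(0x1123)
lines = decode_4_to_16(fields["opcode"])
print(fields)
print(lines)
```

In hardware there is no shifting at all: the fields are simply different bundles of wires leaving the IR, so "extraction" costs zero gates.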

Once the decoder has fired, the rest of the control unit is just combinational logic — a giant truth table that maps (opcode, timing_state, flag_bits) to a vector of microoperation enables.

| Opcode | Mnemonic | T3 microop | T4 microop | T5 microop |
|---|---|---|---|---|
| 0001 | ADD | A_BUS <- REG[REG_A], B_BUS <- REG[REG_B] | ALU_OUT <- A_BUS + B_BUS, update flags | REG[REG_D] <- ALU_OUT |
| 0010 | SUB | A_BUS <- REG[REG_A], B_BUS <- REG[REG_B] | ALU_OUT <- A_BUS - B_BUS, update flags | REG[REG_D] <- ALU_OUT |
| 0011 | AND | A_BUS <- REG[REG_A], B_BUS <- REG[REG_B] | ALU_OUT <- A_BUS & B_BUS, update flags | REG[REG_D] <- ALU_OUT |
| 1010 | JMP | PC <- IMMEDIATE | - | - |
| 1011 | BEQ | (if Z=1) PC <- IMMEDIATE | - | - |

That table is implemented in two ways depending on the design philosophy:

- Hardwired control. A combinational network of AND/OR gates synthesizes each enable. This is fast and is how RISC chips do it. You can build hardwired control with AND gates, OR gates, and friends from our logic gate truth tables reference.

- Microprogrammed control. Each instruction maps to an address in a small ROM (the control store) that contains the microoperation vectors for every cycle. CISC chips like the original x86 used this because complex instructions need many cycles. The ROM lookup is just another memory access — see the ROM component reference.
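The two styles can be contrasted in a small Python sketch. Everything here is illustrative: the enable names (`MAR_LOAD`, `PC_INC`, and so on) are hypothetical, only ADD and JMP are filled in, and execute steps are placed at T3 to T5 after the three fetch states, following the worked trace later in this post.

```python
ADD, JMP = 0b0001, 0b1010

# Fetch microoperations are identical for every opcode.
FETCH = {
    0: {"MAR_LOAD"},
    1: {"MEM_RD", "MDR_LOAD", "PC_INC"},
    2: {"IR_LOAD"},
}

# Hypothetical control store: (opcode, timing_state) -> asserted enables.
CONTROL_STORE = {
    (ADD, 3): {"REG_READ_A", "REG_READ_B"},
    (ADD, 4): {"ALU_ADD", "FLAGS_LOAD"},
    (ADD, 5): {"REG_WRITE_D"},
    (JMP, 3): {"PC_LOAD"},
}


def control_signals(opcode: int, t_state: int) -> set:
    """Microprogrammed style: look the enables up in a table (a ROM)."""
    if t_state in FETCH:
        return FETCH[t_state]
    return CONTROL_STORE.get((opcode, t_state), set())


def pc_load_hardwired(decoder_lines: list, t_state: int) -> bool:
    """Hardwired style: one enable written directly as a gate expression."""
    return bool(decoder_lines[JMP]) and t_state == 3


one_hot = [0] * 16
one_hot[JMP] = 1
print(sorted(control_signals(ADD, 4)), pc_load_hardwired(one_hot, 3))
```

The table version is easier to extend (add a row per instruction), while the gate version has no lookup delay, which is the trade-off the two bullets above describe.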

The control unit is documented as a primitive in the control unit reference. For the deeper question of how a finite-state machine drives that control, our counters and state machines post is the prerequisite.

How Does the Execute Phase Actually Compute?

Execute is where work happens. Up to this point, the CPU has only moved bits around. Now it transforms them.

For a typical arithmetic instruction like ADD R3, R1, R2, execute does three things:

- Register read. The control unit asserts the read-enable on the register file at the addresses given by REG_A and REG_B. The register file is two-ported (two reads at the same time) and is built from arrays of 4-bit registers. The values flow onto the A_BUS and B_BUS.

- ALU operation. The ALU sees the two operands and the opcode-derived ALU function code. For ADD it routes them through the ripple-carry adder (or carry-lookahead in faster designs — see our post on carry-lookahead adders). For AND/OR/XOR it routes through the corresponding gate array. The ALU’s full design is treated in how an ALU works and as a primitive in the ALU 8-bit reference.

- Write-back and flag update. On the next clock edge, the ALU output is latched into REG_D. Simultaneously, the flag bits — Zero (Z), Carry (C), Sign (S), Overflow (V) — are computed from the ALU result and stored in the flags register. We have a dedicated post on CPU flags.
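As an illustration of the ALU and flag steps, here is a sketch of an adder that also derives Z, C, S, and V. The 8-bit default width is an assumption chosen to match the example values used later in this post, and `alu_add` is our own name.

```python
def alu_add(a: int, b: int, width: int = 8) -> tuple:
    """Add two unsigned `width`-bit values and derive the Z, C, S, V flags."""
    mask = (1 << width) - 1
    sign_bit = 1 << (width - 1)
    full = (a & mask) + (b & mask)  # keeps the carry-out bit
    result = full & mask            # what actually fits in the register
    flags = {
        "Z": int(result == 0),
        "C": int(full > mask),
        "S": int(bool(result & sign_bit)),
        # Signed overflow: both operands share a sign that the result lacks.
        "V": int(bool((a ^ result) & (b ^ result) & sign_bit)),
    }
    return result, flags


for a, b in [(0x05, 0x07), (0x7F, 0x01), (0xFF, 0x01)]:
    result, flags = alu_add(a, b)
    print(f"{a:#04x} + {b:#04x} = {result:#04x}  {flags}")
```

The three test pairs exercise the interesting cases: an ordinary sum, a signed overflow (127 + 1), and an unsigned carry that wraps to zero (255 + 1).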

The same template that walks fetch and decode also walks execute: open the 4-bit ALU demonstration to see operands flow through, and the 8-bit ALU system for a more realistic datapath.

Why the execute phase is the variable one

Fetch and decode are roughly constant in cycle count. Execute is not. A register-register ADD finishes in one cycle. A LOAD instruction has to issue another memory access, which on a cache miss that goes all the way to DRAM can take 200+ cycles. A branch itself takes a single cycle, but a misprediction forces the pipeline to discard every instruction fetched after it. Most of the architectural complexity of a modern CPU is wrapped around hiding execute-phase variance.

How Do Conditional Branches Actually Re-aim the PC?

Branches are the only reason programs are not straight lines. Without them, every program would execute its instructions in order from the first to the last and then halt. Conditional branches let the CPU pick a different next instruction based on a flag.

The microoperation for BEQ target (branch if equal) is:

    T3: if (Z == 1) PC <- IMMEDIATE
        else (no-op; PC already incremented during fetch)

That if is not software; it is a single AND gate. The control unit ANDs the BEQ-decode line with the Z flag from the flags register; the output is the load-enable on the PC. When both are HIGH, the PC latches a new value on the next clock edge and the next fetch reads from the branch target. When either is LOW, the load-enable stays LOW and the PC keeps the value it had after the post-fetch increment.

| Mnemonic | Meaning | Boolean condition |
|---|---|---|
| BEQ | branch if equal | $Z = 1$ |
| BNE | branch if not equal | $Z = 0$ |
| BLT | branch if less than (signed) | $S \oplus V = 1$ |
| BGE | branch if greater or equal (signed) | $S \oplus V = 0$ |
| BCS | branch if carry set | $C = 1$ |
| BVS | branch if overflow set | $V = 1$ |

These conditions are exactly what makes Boolean algebra physical: BLT is the logical complement of BGE, so a single inverter on the shared condition signal turns one into the other at almost no cost in silicon area. For a primer on flag arithmetic, our post on two’s complement shows where S and V come from.
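The branch decision can be sketched as two small functions. The flag expressions below are the conventional ones for a compare implemented as a subtraction, which is an assumption about this example CPU; the function names are ours.

```python
def branch_taken(mnemonic: str, flags: dict) -> bool:
    """Evaluate a branch condition from the Z, C, S, V flag bits."""
    z, c, s, v = flags["Z"], flags["C"], flags["S"], flags["V"]
    conditions = {
        "BEQ": z == 1,
        "BNE": z == 0,
        "BLT": (s ^ v) == 1,  # signed less-than
        "BGE": (s ^ v) == 0,  # complement of BLT
        "BCS": c == 1,
        "BVS": v == 1,
    }
    return conditions[mnemonic]


def next_pc(pc_after_fetch: int, target: int, mnemonic: str, flags: dict) -> int:
    """PC load-enable = (branch decoded) AND (condition true)."""
    return target if branch_taken(mnemonic, flags) else pc_after_fetch


equal = {"Z": 1, "C": 0, "S": 0, "V": 0}
print(hex(next_pc(0x11, 0x40, "BEQ", equal)))  # branch taken
print(hex(next_pc(0x11, 0x40, "BNE", equal)))  # falls through
```

Note that `next_pc` never computes "PC + 1": the fall-through value was already produced during fetch, so the branch hardware only has to decide whether to overwrite it.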

A branch is also where the CPU’s predictive circuitry earns its keep. We will get to branch prediction in the modern-extensions section.

What Is the Memory Hierarchy, and Why Does It Have So Many Levels?

If memory were uniformly fast, the CPU would just talk to it directly. It is not. There is a gap of roughly seven orders of magnitude between the latency of an on-chip register (about one cycle) and that of a spinning disk (milliseconds, which is tens of millions of cycles). Computer architects fill that gap with a stack of progressively larger, progressively slower storage layers.

| Layer | Capacity | Latency | Built from |

|---|---|---|---|

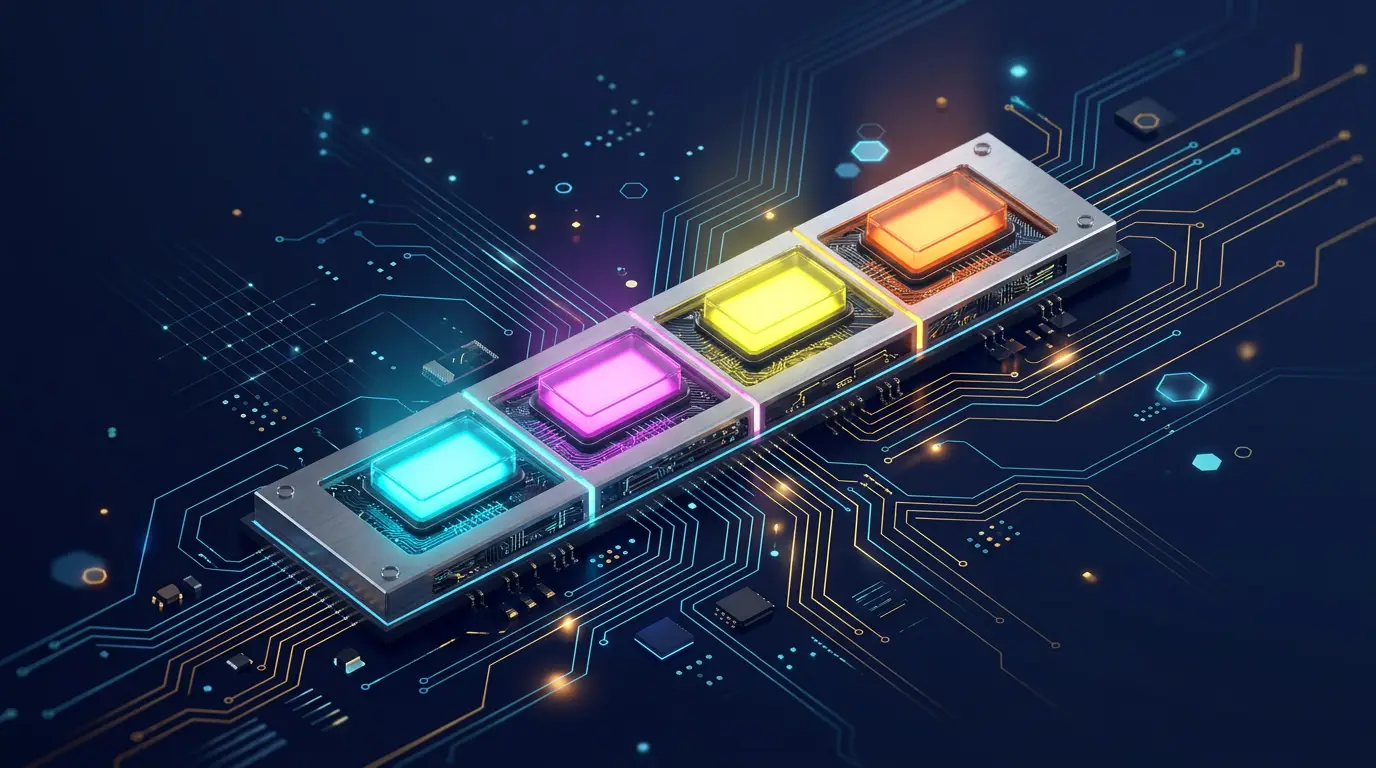

| Register | KB | cycle | Edge-triggered flip-flops |

| L1 cache | 32-64 KB | 4 cycles | SRAM (6-transistor cells) |

| L2 cache | 256 KB - 2 MB | 10-15 cycles | SRAM |

| L3 cache | 4-64 MB | 30-50 cycles | SRAM |

| DRAM (main) | 4-256 GB | 200-300 cycles | DRAM (1 transistor + capacitor) |

| NVMe SSD | 256 GB - 8 TB | cycles | Flash cells |

| Spinning disk | 1-20 TB | cycles | Magnetic platters |

A few rules of thumb fall out of that table:

- The closer to the CPU, the smaller and faster. Caches are physically next to the cores. Latency is dominated by how far signals have to travel, so geometry sets a floor.

- Each cache level is roughly an order of magnitude bigger and several times slower than the level above. This is no coincidence: caches are sized to maximize hit rate per square millimeter of silicon at each latency tier.

- Bandwidth is decoupled from latency. A DRAM access is slow to start but fast to stream once the row is open, which is why streaming workloads can saturate DRAM bandwidth even though their per-access latency is brutal.

For an interactive primer on the storage end of this stack, see RAM vs ROM and the endianness discussion of how multi-byte values sit in memory.

The memory hierarchy is the reason most modern CPU optimization is about not talking to DRAM. A single L3 miss costs hundreds of cycles during which the CPU could have retired hundreds of instructions. Avoiding misses — through prefetching, blocking, cache-aware data structures — is where most performance work happens.
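The cost of misses can be estimated with the standard average-memory-access-time recurrence: each level costs its own latency plus its miss rate times the expected cost of the level below. The sketch below uses mid-range latencies from the table above; the hit rates are invented for illustration and will differ widely between workloads.

```python
def average_access_cycles(levels: list, memory_latency: float) -> float:
    """levels: list of (hit_latency_cycles, hit_rate) tuples, L1 first."""
    expected = memory_latency
    # Work from the bottom of the hierarchy upward: each level adds its own
    # latency plus the miss fraction times the cost of the level below it.
    for latency, hit_rate in reversed(levels):
        expected = latency + (1.0 - hit_rate) * expected
    return expected


# (latency in cycles, assumed hit rate) for L1, L2, L3; DRAM at 250 cycles.
caches = [(4, 0.95), (12, 0.80), (40, 0.75)]
print(average_access_cycles(caches, 250))      # roughly 5.6 cycles
print(average_access_cycles(caches[:1], 250))  # L1 only: 16.5 cycles
```

With these assumed numbers, three cache levels bring the average access down to within a couple of cycles of the L1 latency, even though DRAM itself is about 60 times slower than L1.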

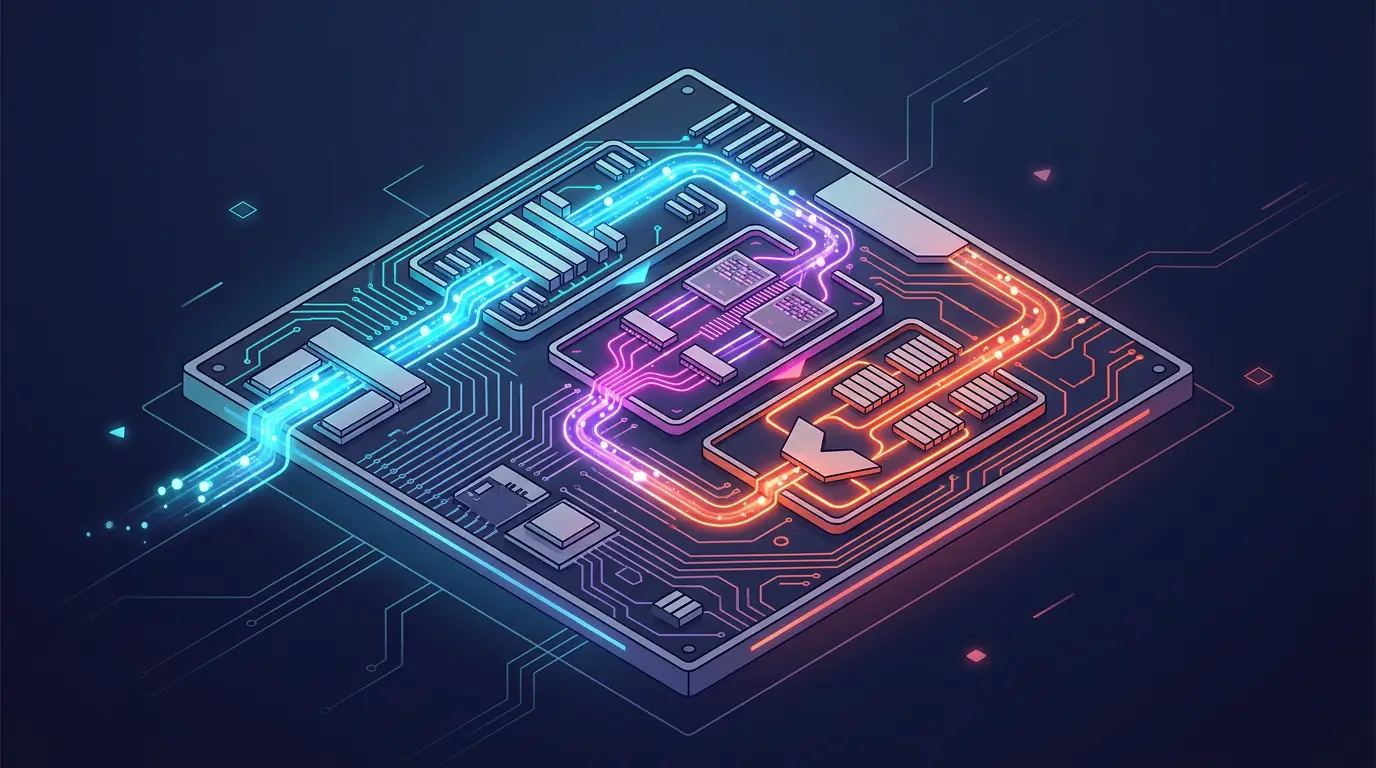

What Is Pipelining, and How Does It Make Things Faster?

Our textbook fetch-decode-execute machine is a multi-cycle, non-pipelined design: it runs one instruction at a time, taking N clock cycles per instruction. The clock has to be slow enough that the longest step (typically a memory access) finishes within one cycle, and while one unit is working the others sit idle. That is wasteful.

Pipelining notices that fetch, decode, and execute use different hardware. While the execute unit is busy with instruction $i$, the decode unit could be working on instruction $i+1$, and the fetch unit could be reading instruction $i+2$. If we add register barriers between stages (pipeline registers) and let each stage run independently, we get throughput of one instruction per cycle even though each individual instruction still takes three cycles to traverse the pipeline.

    Cycle:    1   2   3   4   5   6
    Fetch:    I1  I2  I3  I4  I5  I6
    Decode:       I1  I2  I3  I4  I5
    Execute:          I1  I2  I3  I4

That is a 3x throughput win on paper. In practice you have to deal with hazards:

- Structural hazards. Two stages want the same hardware. Solved by duplicating it (e.g. separate adders for PC increment and ALU work).

- Data hazards. Instruction $i+1$ needs the result of instruction $i$, which is still in the pipeline. Solved with forwarding (route the ALU output back to the input of the next ALU op) or by stalling.

- Control hazards. A branch decides the next PC at execute, but fetch has already pulled in two wrong instructions. Solved with branch prediction (and pipeline flush on misprediction).
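Leaving hazards aside for a moment, the ideal schedule in the diagram above follows from one rule: each instruction enters a stage one cycle after the instruction before it. The sketch below (function names are ours) rebuilds that table and shows how the speedup approaches 3x as the instruction count grows.

```python
def pipeline_schedule(num_instructions: int,
                      stages=("Fetch", "Decode", "Execute")) -> dict:
    """Ideal hazard-free schedule.

    Counting instructions, stages, and cycles from 0, instruction i
    occupies stage s during cycle i + s.
    """
    total_cycles = num_instructions + len(stages) - 1
    table = {}
    for s, name in enumerate(stages):
        row = [""] * total_cycles
        for i in range(num_instructions):
            row[i + s] = f"I{i + 1}"
        table[name] = row
    return table


def speedup(num_instructions: int, num_stages: int = 3) -> float:
    """Cycles without pipelining divided by cycles with ideal pipelining."""
    unpipelined = num_instructions * num_stages
    pipelined = num_instructions + num_stages - 1
    return unpipelined / pipelined


for name, row in pipeline_schedule(4).items():
    print(f"{name:8}", " ".join(f"{cell:3}" for cell in row))
print(speedup(6), speedup(1000))
```

For six instructions the gain is only 2.25x because the pipeline spends two cycles filling; the 3x figure is the limit for long instruction streams.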

Modern CPUs are pipelined ten to twenty stages deep. The deeper the pipeline, the higher the clock frequency you can sustain (each stage does less work, so each stage has a shorter critical path), but the higher the cost of a misprediction. The Pentium 4 famously pushed to 31 stages and was punished by branch-heavy code. For a deeper look at the analog physics behind pipeline depth, see propagation delay and setup, hold, and metastability.

RISC vs CISC: Two Schools of Decode

Once you accept that the control unit is the brain of the CPU, the natural next question is: how complex should an instruction be allowed to be? Two answers crystallized in the 1980s.

| Property | RISC (Reduced Instruction Set) | CISC (Complex Instruction Set) |
|---|---|---|
| Instruction count | ~50-200 | ~500-2000 |
| Instruction length | Fixed (e.g. 32 bits) | Variable (1-15 bytes) |
| Memory access | Only LD / ST | Most instructions can touch memory |
| Cycles per instruction | ~1 (with pipelining) | Variable, often many |
| Decode complexity | Trivial (parallel decode) | Hard (variable-length, micro-op fan-out) |
| Examples | ARM, RISC-V, MIPS, PowerPC | x86, x86-64, VAX, IBM System/360 |

The RISC argument is: simple instructions decode fast, pipeline cleanly, and let the compiler do the work of composing them. The CISC argument is: complex instructions encode more meaning per byte, which mattered when memory was expensive and compilers were weak.

Both arguments have aged interestingly. Modern x86 chips are CISC at the instruction-set level but RISC underneath: the front-end decodes x86 instructions into internal micro-ops that look very RISC-like, and the rest of the pipeline operates on those. ARM has gone the other way and added more complex instructions over time. The frontier is converging — but the teaching model is still cleaner with RISC, which is why almost every undergraduate textbook now uses MIPS or RISC-V.

What Modern Extensions Sit on Top of the Basic Pipeline?

The single-issue, in-order, five-stage pipeline is the canonical undergraduate CPU. Real chips are much more aggressive. We will not derive these in detail — each deserves its own post — but here is the layer cake.

Out-of-order execution

If instruction $i+1$ depends on $i$ but $i+2$ does not, why wait? Out-of-order CPUs maintain a reservation station of decoded instructions and dispatch them to execution units as soon as their operands are ready, regardless of program order. A reorder buffer commits results back in program order so the visible behavior matches the textbook. The earliest commercial out-of-order machines were the CDC 6600 (1964, scoreboard) and the IBM 360/91 (1967, Tomasulo’s algorithm). The technique returned to mainstream desktop CPUs with the Pentium Pro and is present in essentially every high-performance core since.

Superscalar issue

A superscalar CPU has multiple execution units (multiple ALUs, a load-store unit, a floating-point unit) and can issue multiple instructions per cycle. Modern Apple and AMD cores can issue on the order of 6-8 instructions per cycle at peak on well-tuned code. The decode width (how many instructions the front end can decode per cycle) is the limiting factor for many workloads.

Branch prediction

A pipeline that flushes on every branch loses its throughput advantage. Branch predictors guess the outcome of conditional branches before the result is known. Modern predictors are remarkably good (accuracy in the high 90s percent for typical code), using two-level adaptive schemes, perceptron predictors, and TAGE (tagged geometric history). When they are right, the pipeline keeps flowing without a bubble. When they are wrong, every instruction fetched after the branch is flushed, so the misprediction penalty grows with pipeline depth.
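The schemes named above are too large to show here, so the sketch below uses a much simpler classic instead: a single 2-bit saturating counter. It is not how a modern predictor works, but it shows the core idea of learning from recent outcomes. The class and function names are ours, and the starting state is an arbitrary choice.

```python
class TwoBitPredictor:
    """One 2-bit saturating counter: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self) -> None:
        self.counter = 2  # start in "weakly taken" (arbitrary choice)

    def predict(self) -> bool:
        return self.counter >= 2

    def update(self, taken: bool) -> None:
        # Move one step toward the observed outcome, saturating at 0 and 3.
        if taken:
            self.counter = min(3, self.counter + 1)
        else:
            self.counter = max(0, self.counter - 1)


def accuracy(outcomes: list) -> float:
    """Fraction of branch outcomes the predictor guessed correctly."""
    predictor = TwoBitPredictor()
    correct = 0
    for taken in outcomes:
        correct += predictor.predict() == taken
        predictor.update(taken)
    return correct / len(outcomes)


# A loop branch: taken 9 times, then falls through; the loop runs 10 times.
loop_branch = ([True] * 9 + [False]) * 10
print(accuracy(loop_branch))  # 0.9
```

The counter mispredicts only the loop exit each time, because one not-taken outcome moves it from "strongly taken" to "weakly taken" rather than flipping the prediction. A 1-bit scheme would mispredict twice per loop.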

SIMD and vector extensions

Single-Instruction Multiple-Data adds wide registers (128, 256, 512 bits) and instructions that operate on lanes in parallel. AVX-512 can do sixteen 32-bit floating-point multiplies in one instruction. SIMD is why modern image processing, video codecs, and machine-learning kernels are so much faster than naive scalar code.

SMT (hyperthreading)

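If a thread stalls on a cache miss, the execution units sit idle. Simultaneous multi-threading lets two (or more) threads share one core’s execution units: the front end fetches from both threads, and idle issue slots are filled from whichever thread has instructions ready. Because a stalled thread leaves its slots free for the other, SMT helps stall-heavy, memory-bound workloads more than compute-bound ones. The threads also compete for cache and execution resources, which limits the gain.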

Speculation

The same hardware that predicts branches also speculates around memory ordering, exceptions, and cache behavior. Speculation is the engine that powers modern CPU performance, and it is also the source of side-channel vulnerabilities like Spectre and Meltdown, which exploit the cache footprint left behind by speculatively executed instructions whose results are later discarded.

How Do You Build All of This for Real (Even at Toy Scale)?

There is a tendency, when reading about CPUs, to skip from the textbook diagram to “and then there are a billion transistors”. The middle is where the learning lives. You can build a working 8-bit CPU in a digital simulator in a weekend. The principles do not change at scale; only the optimizations do.

A reasonable starter project sequence:

- Build a 4-bit ALU. Combine adders, logic gates, and a multiplexer to select the operation. Walk through it in how an ALU works and try the 4-bit ALU demonstration template.

- Build a register file. Four 4-bit registers wired to a multiplexer for read and a decoder for write.

- Build a memory. A small RAM with address control is enough.

- Build a program counter. A counter with a load enable, sized to your address bus. Documented as the program counter primitive.

- Build a control unit. Hardwired is easier; microprogrammed is more elegant. Either way, it is just combinational logic of (opcode, timing_state).

- Wire it all together. The sequential instruction executor template is exactly this: a small but real CPU you can step through one cycle at a time.

For a step-by-step build narrative, our companion post build a CPU from scratch in a simulator walks the wiring decisions in detail.

Putting the Whole Cycle Together

Let’s run the instruction ADD R3, R1, R2 on our textbook CPU, assuming R1 = 5 and R2 = 7, and the instruction is at memory address 0x10. We will list every microoperation across every clock cycle. This is the kind of trace that, when you can do it in your head, means you understand a CPU.

    Initial state: PC = 0x10, R1 = 0x05, R2 = 0x07, R3 = 0x00, Z=C=S=V=0
    Memory at 0x10: 0001 0001 0010 0011   (ADD R3, R1, R2; destination first)

    CYCLE 1 (T0, fetch):
      MAR <- PC                     ; MAR = 0x10
      ADDRESS_BUS <- MAR
      RD <- 1, MEM_REQ <- 1
    CYCLE 2 (T1, fetch):
      MDR <- DATA_BUS               ; MDR = 0x1123
      PC <- PC + 1                  ; PC = 0x11
    CYCLE 3 (T2, fetch + start decode):
      IR <- MDR                     ; IR = 0x1123
      RD <- 0, MEM_REQ <- 0
      (control unit decodes IR[15:12] = 0001 = ADD)
    CYCLE 4 (T3, execute step 1):
      A_BUS <- REG[IR[11:8]]        ; A_BUS = R1 = 0x05
      B_BUS <- REG[IR[7:4]]         ; B_BUS = R2 = 0x07
    CYCLE 5 (T4, execute step 2):
      ALU_OUT <- A_BUS + B_BUS      ; ALU_OUT = 0x0C
      Z <- (ALU_OUT == 0)           ; Z = 0
      C <- carry_out                ; C = 0
      S <- ALU_OUT[MSB]             ; S = 0
      V <- overflow                 ; V = 0
    CYCLE 6 (T5, write-back):
      REG[IR[3:0]] <- ALU_OUT       ; R3 = 0x0C
      (go to CYCLE 1 for the next instruction)

Six cycles, one instruction. A pipelined version of the same machine would have already started fetching instruction $i+1$ during cycle 4, instruction $i+2$ during cycle 5, and so on. A modern out-of-order superscalar CPU might have a dozen instructions in flight at once. But the meaning of each cycle is exactly what we just wrote down. Everything else is bookkeeping.
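The trace above can be replayed in software. The sketch below is a deliberately narrow model: it implements only the register-mode ADD, assumes 8-bit registers (matching the two-digit hex values in the trace), and uses a dictionary for memory. The function name `run_add` is ours.

```python
def run_add(memory: dict, regs: list, pc: int) -> dict:
    """Step one register-mode ADD through T0..T5 and return the final state."""
    s = {"PC": pc, "MAR": 0, "MDR": 0, "IR": 0, "A_BUS": 0, "B_BUS": 0,
         "ALU_OUT": 0, "Z": 0, "C": 0, "S": 0, "V": 0}
    # T0 (fetch): MAR <- PC
    s["MAR"] = s["PC"]
    # T1 (fetch): MDR <- MEM[MAR], PC <- PC + 1
    s["MDR"] = memory[s["MAR"]]
    s["PC"] += 1
    # T2 (fetch): IR <- MDR, then decode the opcode field IR[15:12]
    s["IR"] = s["MDR"]
    if (s["IR"] >> 12) & 0xF != 0b0001:
        raise ValueError("this sketch only implements ADD")
    # T3 (execute step 1): read both source registers
    s["A_BUS"] = regs[(s["IR"] >> 8) & 0xF]
    s["B_BUS"] = regs[(s["IR"] >> 4) & 0xF]
    # T4 (execute step 2): 8-bit add plus flag computation
    full = s["A_BUS"] + s["B_BUS"]
    s["ALU_OUT"] = full & 0xFF
    s["Z"] = int(s["ALU_OUT"] == 0)
    s["C"] = int(full > 0xFF)
    s["S"] = (s["ALU_OUT"] >> 7) & 1
    s["V"] = int(bool((s["A_BUS"] ^ s["ALU_OUT"])
                      & (s["B_BUS"] ^ s["ALU_OUT"]) & 0x80))
    # T5 (write-back): REG[IR[3:0]] <- ALU_OUT
    regs[s["IR"] & 0xF] = s["ALU_OUT"]
    return s


regs = [0] * 16
regs[1], regs[2] = 0x05, 0x07
state = run_add({0x10: 0x1123}, regs, pc=0x10)
print(hex(regs[3]), hex(state["PC"]),
      state["Z"], state["C"], state["S"], state["V"])
```

Each commented step corresponds to one clock cycle of the trace, so the final register and flag values can be compared line by line with the hand-worked version.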

If you want to see those six cycles happen on a real (simulated) circuit, the sequential instruction executor template is calibrated to step exactly this way. Pause between cycles, watch the bus values change, and the abstract trace becomes a physical process. For a more guided walk-through, our case study post narrates it in plain English with screenshots.

Where the Microprocessor Story Goes Next

You now have the textbook. Beyond it sit topics each large enough to be a post on its own:

- Caches — set-associative mapping, replacement policy, write-through vs write-back.

- Virtual memory — page tables, TLBs, translation, protection.

- Exceptions and interrupts — the asynchronous side of the control unit.

- Multi-core coherence — MESI, snooping, ring buses, mesh interconnects.

- Power and clocking — voltage-frequency scaling, clock gating, clock skew.

- Security and side channels — the cost of speculation.

If you are designing your own CPU as a learning exercise, the build a CPU from scratch post is the natural next read. If you want to follow the instruction stream further into where data lives, RAM vs ROM and endianness are the next two lenses.

The fetch-decode-execute cycle is one of the most beautiful loops in engineering: short enough to fit on a napkin, expressive enough to run every program ever written. The closer you look at it, the more you find. Open the sequential instruction executor template, step through a single instruction, and the ideas in this post turn into a living circuit. That is the moment microprocessors stop being magic.

Build it next: Open the sequential instruction executor template in DigiSim and step through your first instruction. Then read build a CPU from scratch in a simulator to wire one yourself from scratch.