Carry-Lookahead Adder: Faster Than Ripple-Carry

TL;DR: A carry-lookahead adder computes every carry bit in parallel from per-bit generate () and propagate () signals, dropping the addition’s critical path from to at the cost of a wider, more complex carry network.

A 4-bit ripple-carry adder is straightforward: chain four full adders, route each into the next . The trouble starts when you ask how fast it goes. The most-significant bit cannot finalize its sum until the carry from the LSB has rippled through every intermediate stage, one full-adder delay per stage. For a 64-bit ALU, that’s on the critical path — completely unacceptable for a modern processor running at gigahertz clock rates.

Carry-lookahead adders (CLAs) attack the problem at its source: they compute every carry in parallel from a small set of intermediate signals derived directly from the inputs.

What Makes Ripple-Carry Slow?

Recall the full-adder equations:

The sum depends on . The carry also depends on . So the chain of dependencies is . If a single carry stage takes time, the worst-case total addition time is approximately:

Linear in word width. For 4-bit words this might be 8 nanoseconds, perfectly acceptable. For 32 or 64-bit words it dominates the cycle time of the entire processor. This is the kind of propagation-delay limit that has driven CPU architecture for fifty years.

Generate and Propagate

Look again at the carry equation:

The first term, , is true exactly when both operand bits are 1 — in which case stage unconditionally produces a carry-out, regardless of what arrives at . Define:

This is the generate signal — stage generates a carry on its own.

The second term, , says: if exactly one of the operand bits is 1, then whatever arrives at propagates straight through. Define:

This is the propagate signal — stage passes any incoming carry to its output.

The carry equation collapses to:

This is the AND gate and OR gate you already know, doing exactly the work their truth tables describe — but now combined into a recurrence that you can flatten.

Flattening the Carry Recurrence

Substitute the recurrence into itself. Starting from a known :

The pattern is clean. Each carry is a sum-of-products over the generate and propagate signals from stages through . The longest term in has five inputs ANDed together — but importantly, all four carries can be computed simultaneously because none of them depends on any of the others. They all depend only on , , and , which are derived directly from the inputs in two gate delays.

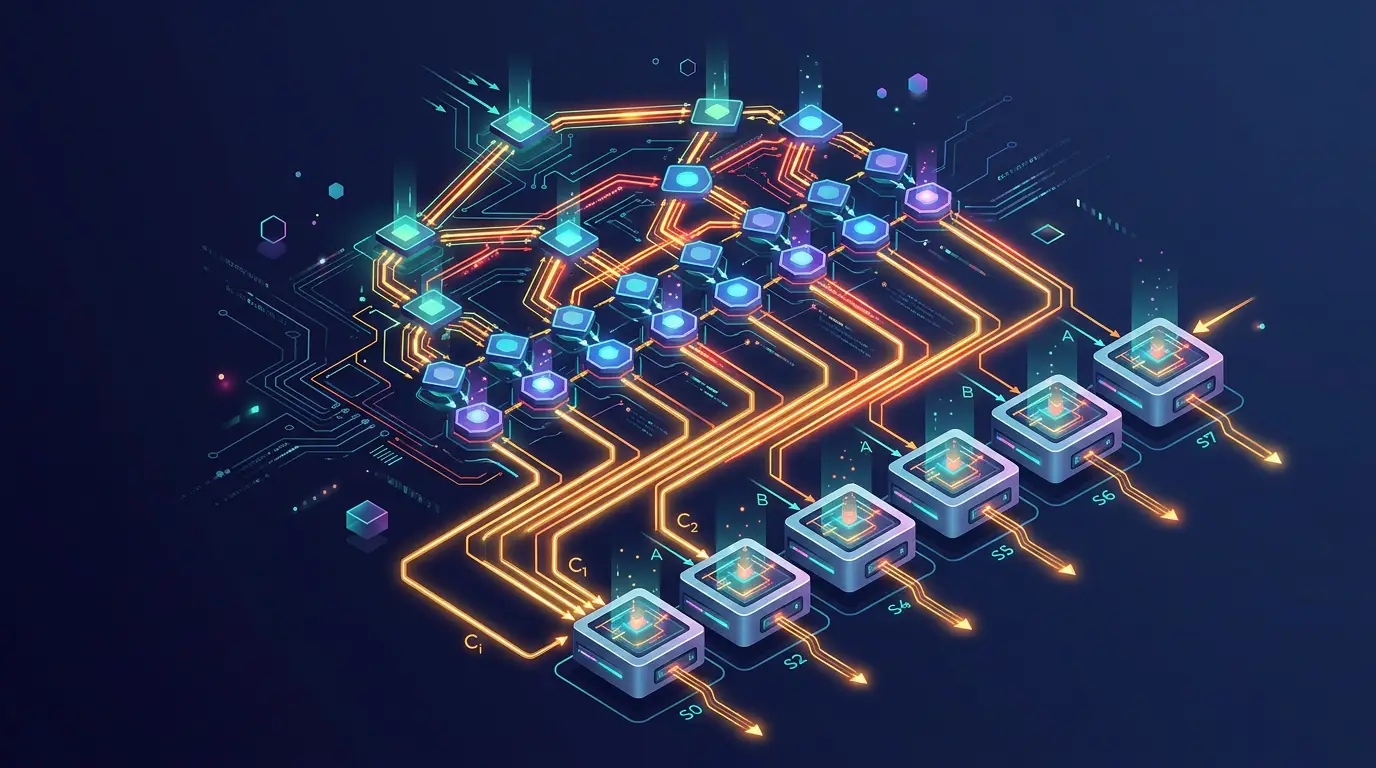

The 4-Bit CLA Architecture

Three logical layers:

- Generate/propagate layer. AND gates produce in one delay; XOR gates produce in one delay. These run in parallel.

- Carry network. A wide AND-OR tree computes from the formulas above. Two gate delays (one AND, one OR) regardless of how many bits.

- Sum layer. XOR gates produce in one delay. (Note that once is computed.)

Total critical path: 4 gate delays for any width up to the point where the carry network itself blows out. Compare with for ripple-carry. For a 16-bit adder, that’s roughly 4 versus 32 — an 8x speedup.

Block Diagram of a 4-Bit CLA

A0,B0 ─┬── XOR ── P0 ──┐ ┌── XOR ── S0

└── AND ── G0 ──┤ │

│ ┌─── carry network ───┐ │

A1,B1 ─┬── XOR ── P1 ──┤ │ │ ├── XOR ── S1

└── AND ── G1 ──┤ │ computes │ │

├──>│ C1, C2, C3, C4 │─┤

A2,B2 ─┬── XOR ── P2 ──┤ │ in 2 gate delays │ ├── XOR ── S2

└── AND ── G2 ──┤ │ │ │

│ └─────────────────────┘ │

A3,B3 ─┬── XOR ── P3 ──┤ ├── XOR ── S3

└── AND ── G3 ──┘ │

└────────── C4

C0 ──────────────────────>┘Gate Count: The Hidden Cost

The carry network grows quadratically in its fanin. For , you need a 5-input AND for the full propagation chain; for , a 17-input AND. Real silicon doesn’t have arbitrary-input AND gates — wide gates decompose into trees, adding delay. So a “flat” CLA scales only up to about 4 to 8 bits before you start paying for tree depth.

The standard fix is hierarchy. Group bits into 4-bit blocks, each block computes its own block-level generate and propagate signals, and a second-level CLA combines those. For a 16-bit adder you get four 4-bit CLA blocks plus one 4-bit lookahead unit on the block carries. Critical path: roughly levels of lookahead times a constant.

Comparison Table

| Design | 16-bit critical path | Gate count |

|---|---|---|

| Ripple-carry | ~32 gate delays | ~80 |

| Single-level 4-bit CLA blocks, ripple between blocks | ~16 gate delays | ~120 |

| Two-level 16-bit CLA | ~8 gate delays | ~200 |

| Kogge-Stone (full parallel-prefix) | ~5 gate delays | ~400 |

| Brent-Kung (sparse prefix) | ~7 gate delays | ~150 |

(Rough estimates — actual numbers depend on gate library and optimization.)

The takeaway: every halving of delay roughly doubles gate count. CPU designers pick the spot on the curve that matches their cycle-time budget. ARM cores at 1 GHz can use Brent-Kung; AMD/Intel x86 cores at 5 GHz use full Kogge-Stone or worse.

Beyond CLA: Parallel-Prefix Adders

The carry-lookahead idea generalizes. The recurrence is associative under a custom operator — you can pair up adjacent positions, then pairs of pairs, in a tree. This is the parallel-prefix family:

- Kogge-Stone — fastest, uses cells, each level fans out to many.

- Brent-Kung — half the gates of Kogge-Stone, one extra logic level.

- Han-Carlson, Ladner-Fischer, Sklansky — different trade-offs of fanout, wiring complexity, and depth.

Modern high-end ALUs use a hybrid — Kogge-Stone in the upper bits where speed dominates, Brent-Kung in the lower bits where area matters. The exact choice is a circuit-design specialty.

Implementing a CLA in DigiSim

You can build a 4-bit CLA from primitives — four AND gates for the generates, four XOR gates for the propagates, the carry tree from the equations above, and four XOR gates for the sums. The ADDER and ADDER_8BIT components in DigiSim use carry-lookahead internally to keep delay reasonable. To compare against the slow path, open the full-adder-with-carry template, chain four instances together as ripple-carry, and time the critical path against an equivalent CLA. The difference grows visibly as you push to 8 and 16 bits.

If you want to characterize the gate-delay scaling experimentally, the simulator’s logic analyzer can tag the moment and change versus the moment and stabilize. Run the experiment with both architectures across word widths and you’ll see the linear vs logarithmic scaling directly.

Common Pitfalls

- Forgetting that uses XOR, not OR. Either definition — or — produces a correct carry, because the case is already covered by . The reason to prefer XOR is that the same wire feeds the sum stage: . With OR-defined that reuse breaks down and you need a separate XOR for the sum.

- Underestimating fanin scaling. A “16-bit CLA” implies a 17-input AND somewhere unless you go hierarchical. Real designs always go hierarchical.

- Assuming CLA is always faster. For very narrow words (4 bits or fewer) the constants in CLA exceed ripple-carry’s. The crossover is around 6 to 8 bits.

- Mixing up sum and carry critical paths. The carry network’s depth dominates total delay, but the final sum XOR adds one more gate delay. Specifying delay as “carry-network depth” is a common error.

What’s Next

The next post in this series, How an ALU Works: Arithmetic Logic Unit From Gates, integrates the CLA into a full multi-function arithmetic unit alongside subtraction, AND, OR, and shift operations. Following that, Booth Multiplier Explained (With Examples) applies a similar speedup philosophy to multiplication — using a different algorithmic trick to skip past long runs of zeros and ones.

To compare the two adder architectures hands-on, open the full-adder-with-carry template, chain four instances as a ripple-carry adder, and watch the bit-by-bit carry propagation on the logic analyzer. Then build the equivalent 4-bit CLA from the equations in this post and time both circuits — the difference is the entire reason high-speed ALUs exist.