RAM vs ROM: The Essential Difference (with Circuits)

TL;DR: RAM is volatile read-write memory used as the CPU’s working scratchpad; ROM is non-volatile read-mostly memory used to hold firmware and constants. Both are arrays of single-bit cells selected by an address decoder driving word lines and accessed through bit lines; the cell technology is the main thing that differs.

RAM and ROM look identical from outside the chip: an address bus goes in, a data bus comes out, a few control lines select read or write. The differences are in the cells inside — what they are made of, whether they remember without power, and whether you are allowed to write them. Those choices then ripple back out into how the device is used in a computer.

This post compares RAM and ROM directly, walks through the SRAM vs DRAM distinction, surveys ROM types from mask ROM to Flash, traces the address decoder + word-line + bit-line read path, and works through a 16-byte RAM example. Each part connects to a working circuit you can open in DigiSim.

Side-by-Side Comparison

The headline differences:

| Property | RAM | ROM |
|---|---|---|
| Volatility | Loses contents on power-off | Retains contents without power |
| Write capability | Read and write at full speed | Read at speed; write slow or impossible |
| Typical purpose | Working memory, scratchpad, stack, heap | Firmware, boot code, lookup tables, constants |
| Speed (read) | Fast (SRAM ~1 ns; DRAM 10–50 ns) | Comparable read speed; mask ROM is fast, Flash is slower |
| Density (cell size) | DRAM is densest among RAM; SRAM is bulky | Mask ROM and Flash are very dense |
| Cost per bit | DRAM is cheap; SRAM is expensive | Mask ROM is cheapest at scale; Flash mid-tier |
| Refresh required | DRAM yes; SRAM no | Never |
| Endurance (writes) | Effectively unlimited | Mask ROM 0; EPROM ~1000; Flash ~10 000–100 000 |
| Where it sits | Main memory, cache, registers | BIOS/UEFI, microcontroller program memory, character ROMs |

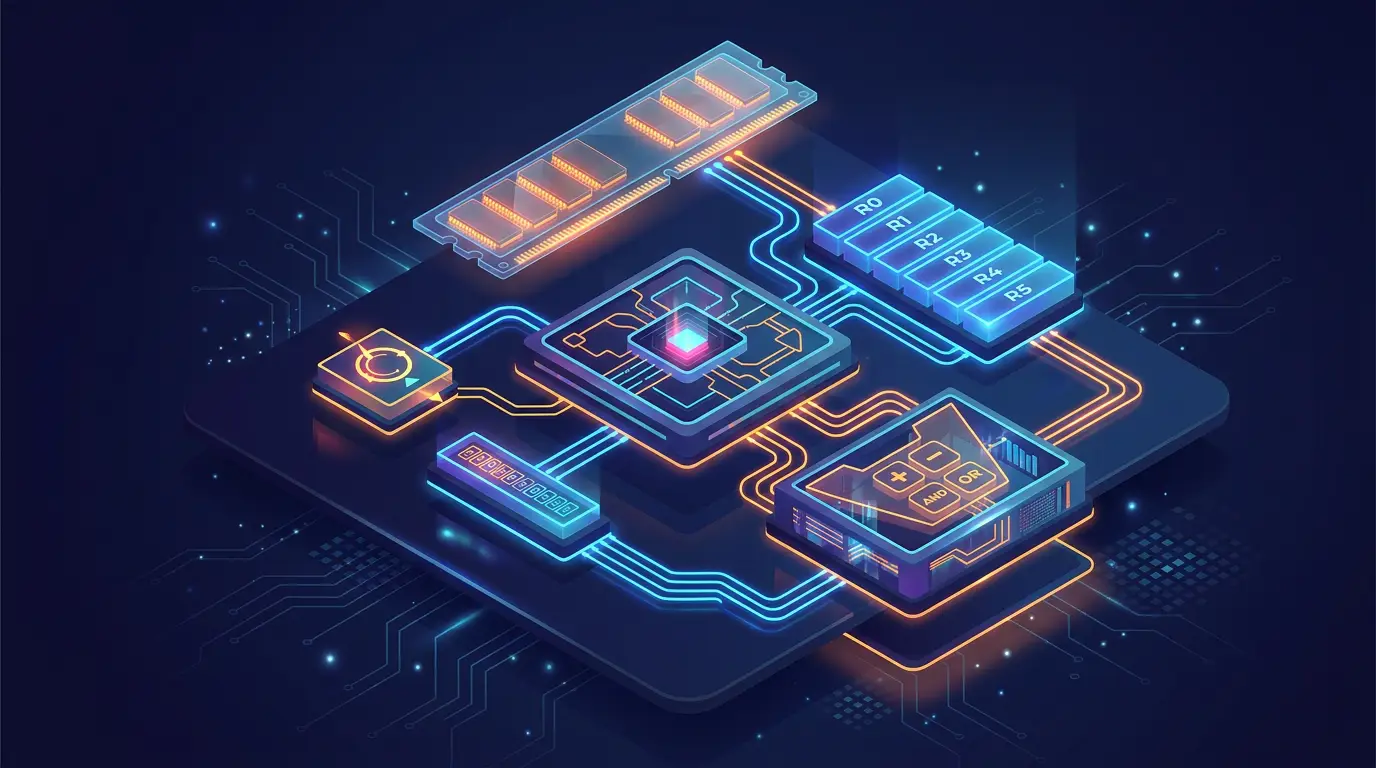

Both technologies appear inside the simulator: RAM for the working memory used by the CPU and ROM for fixed lookup tables and instruction storage in templates like the Sequential Instruction Executor.

SRAM vs DRAM

“RAM” is an umbrella term covering two very different technologies. The trade-off is cell complexity vs density.

SRAM — Static RAM

A standard SRAM cell uses six transistors (the “6T” cell). Four of them form a cross-coupled inverter pair: a bistable latch closely related to the structure described in The SR Latch, but built with MOSFETs and tuned for storage rather than control. The other two are access transistors that connect the latch to a pair of complementary bit lines when the word line is asserted.

Key properties:

- No refresh. The cross-coupled inverters actively hold the value as long as power is applied.

- Fast. Sub-nanosecond access in modern process nodes.

- Bulky. Six transistors per bit means low density.

- Expensive. Used where speed matters most: CPU L1/L2/L3 caches and register files.
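The no-refresh property falls out of the feedback loop. Below is a minimal logic-level sketch in Python, assuming ideal inverters and treating the access transistors as a simple gate controlled by the word line; `SramCell` and its methods are hypothetical names for illustration, not a DigiSim or circuit-simulator API. Re-evaluating the two inverters from a stable state reproduces that same state, which is why the cell holds its value for as long as it is powered.

```python
from typing import Optional


def inverter(x: int) -> int:
    """Ideal logic-level inverter."""
    return 1 - x


class SramCell:
    """Logic-level sketch of a 6T SRAM cell (hypothetical API).

    q and qb are the outputs of the two cross-coupled inverters.
    """

    def __init__(self) -> None:
        self.q, self.qb = 0, 1  # one of the two stable states

    def settle(self) -> None:
        # Each inverter drives the other's input. From a stable state,
        # re-evaluating both reproduces the same values: no refresh needed.
        self.q, self.qb = inverter(self.qb), inverter(self.q)

    def write(self, word_line: int, bit: int) -> None:
        if word_line:
            # Access transistors on: write drivers on the complementary
            # bit lines overpower the latch.
            self.q, self.qb = bit, inverter(bit)
        self.settle()

    def read(self, word_line: int) -> Optional[int]:
        self.settle()
        # Word line low: the cell is isolated and drives nothing (None).
        return self.q if word_line else None


cell = SramCell()
cell.write(word_line=1, bit=1)
for _ in range(1000):            # the latch keeps re-driving itself
    cell.settle()
print(cell.read(word_line=1))    # 1
print(cell.read(word_line=0))    # None
cell.write(word_line=0, bit=0)   # ignored: access transistors are off
print(cell.read(word_line=1))    # 1
```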

DigiSim’s 4-bit register is conceptually an SRAM word — it uses D flip-flops instead of cross-coupled inverters, but the role is identical.

DRAM — Dynamic RAM

A DRAM cell is one transistor and one capacitor. The capacitor holds the bit (charged = 1, discharged = 0) and the transistor connects it to the bit line when the word line fires.

Key properties:

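- Refresh required. The capacitor leaks charge over milliseconds, so the memory controller (or the chip itself, in self-refresh mode) periodically reads every row and writes it back to restore the charge. Commodity DDR DRAM typically requires every row to be refreshed within 64 ms.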

- Slow. Access takes 10–50 ns due to row activation, sense amplification, and precharge.

- Dense. One transistor per bit gives roughly 4–6× the density of SRAM at the same process node.

- Cheap. Used for main system memory: the gigabytes on a DIMM.

The destructive read is also notable: reading a DRAM cell shares the capacitor’s small charge with the much larger bit-line capacitance, which destroys the stored value, so the sense amplifier must rewrite it as part of every read cycle.
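Both DRAM behaviors, leakage and destructive reads, show up in a toy Python model. It assumes normalized voltages (Vdd = 1.0), exponential leakage, a bit line precharged to Vdd/2 that shares charge with the cell on every access, and a sense amplifier that compares against Vdd/2 and then restores a full level. The class name and every constant (a 100 ms leakage time constant, a 10:1 bit-line-to-cell capacitance ratio) are illustrative assumptions, not datasheet values.

```python
import math


class DramCell:
    """Toy 1T1C DRAM cell with voltages normalized so Vdd = 1.0.

    All constants are illustrative assumptions, not datasheet values.
    """

    LEAK_TAU_MS = 100.0        # assumed leakage time constant
    BITLINE_TO_CELL_C = 10.0   # assumed bit-line : cell capacitance ratio
    PRECHARGE = 0.5            # bit line precharged to Vdd/2 before access

    def __init__(self) -> None:
        self.v = 0.0  # voltage on the storage capacitor

    def wait(self, ms: float) -> None:
        # Charge leaks away exponentially toward 0 V.
        self.v *= math.exp(-ms / self.LEAK_TAU_MS)

    def write(self, bit: int) -> None:
        self.v = 1.0 if bit else 0.0

    def read(self) -> int:
        # Charge sharing: cell and bit line settle to a common voltage that
        # is only slightly above or below Vdd/2, destroying the stored level.
        r = self.BITLINE_TO_CELL_C
        shared = (self.v + r * self.PRECHARGE) / (1 + r)
        self.v = shared
        # The sense amplifier resolves the small difference to a clean bit...
        bit = 1 if shared > self.PRECHARGE else 0
        # ...and drives the full level back into the cell (the restore step).
        self.write(bit)
        return bit

    def refresh(self) -> None:
        # Refresh is a read whose result is discarded.
        self.read()


forgotten = DramCell()
forgotten.write(1)
forgotten.wait(200)        # no refresh: 1.0 * e^-2 is about 0.14
print(forgotten.read())    # 0: the stored 1 has leaked away

kept = DramCell()
kept.write(1)
for _ in range(10):        # 10 x 32 ms = 320 ms in total
    kept.wait(32)          # decays to about 0.73 between refreshes
    kept.refresh()
print(kept.read())         # 1
```

Refresh here is literally a read whose result is thrown away: the sense amplifier's write-back is what tops the capacitor up again.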

ROM Types

ROM is not one technology either. The distinguishing feature is how and when the bits get programmed, which determines write endurance.

| Type | When written | Erasable? | Endurance |
|---|---|---|---|
| Mask ROM | At fab time, by mask layer | No | 0 (read-only) |
| PROM | Once, by user (blowing fuses) | No | 1 |
| EPROM | Multiple times, with UV erase | Yes (full chip, UV light, ~30 min) | ~1000 cycles |
| EEPROM | In-circuit, byte addressable | Yes (electrically) | ~10 000–100 000 cycles |
| Flash | In-circuit, block addressable | Yes (electrically, by block) | ~10 000–100 000 cycles |

Mask ROM

The bits are wired into the chip itself by the metallization mask during fabrication. A “1” cell has a connection from word line to bit line; a “0” cell does not. There is no programming mechanism: you order the chip with the desired bit pattern and the foundry builds it into the masks.

Used historically for video-game cartridges, character ROMs in CRT terminals, and the built-in programs of pocket calculators.
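Under the convention above (a connection at a crossing stores a 1), a mask ROM behaves like a fixed connection table. The Python sketch below assumes a hypothetical 4-word × 4-bit array and models each bit line as a wired-OR of the active word lines connected to it. There is deliberately no write function: the pattern exists only in the fabrication masks.

```python
# Hypothetical 4-word x 4-bit mask ROM. Row i lists the bit columns where the
# mask places a word-line-to-bit-line connection (a stored 1).
CONNECTIONS = (
    {0, 2},        # word 0
    {1},           # word 1
    {0, 1, 2, 3},  # word 2
    set(),         # word 3: no connections, all 0s
)
WIDTH = 4


def mask_rom_read(address: int) -> int:
    # Address decoder: exactly one word line is high.
    word_lines = [1 if row == address else 0 for row in range(len(CONNECTIONS))]
    value = 0
    for col in range(WIDTH):
        # Wired-OR bit line: it reads 1 if any active word line connects to it.
        bit = any(wl and col in CONNECTIONS[row] for row, wl in enumerate(word_lines))
        value |= int(bit) << col
    return value


print([mask_rom_read(a) for a in range(4)])  # [5, 2, 15, 0]
```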

PROM (Programmable ROM)

Each cell contains a fuse. The factory ships every fuse intact (all 1s). The user blows selected fuses with a programmer to write 0s. Once blown, the fuse is gone — you cannot rewrite.
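The one-way nature of a fuse fits in a few lines. This is a hypothetical Python model, assuming 8-bit words that start as all 1s (every fuse intact): programming may clear any bit, but a request that would need a blown fuse restored is rejected.

```python
class Prom:
    """Toy fuse PROM (hypothetical API): every fuse ships intact (reads 1),
    and programming can only blow fuses (1 -> 0), never restore them."""

    def __init__(self, words: int, width: int = 8) -> None:
        self.mask = (1 << width) - 1
        self.cells = [self.mask] * words  # all fuses intact: all 1s

    def program(self, address: int, value: int) -> None:
        current = self.cells[address]
        needs_restore = value & ~current & self.mask  # 0 -> 1 requests
        if needs_restore:
            raise ValueError(
                f"address {address}: bits {needs_restore:#04x} are already blown"
            )
        self.cells[address] = current & value  # blow the fuses for the 0 bits

    def read(self, address: int) -> int:
        return self.cells[address]


rom = Prom(words=4)
rom.program(0, 0xA5)
print(hex(rom.read(0)))   # 0xa5
rom.program(0, 0x25)      # allowed: only blows the fuse for bit 7
print(hex(rom.read(0)))   # 0x25
try:
    rom.program(0, 0xFF)  # would need blown fuses to grow back
except ValueError as err:
    print(err)
```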

EPROM (Erasable PROM)

The cell is a floating-gate transistor whose threshold voltage can be raised by injecting electrons. The electrons stay there indefinitely unless removed by ultraviolet light through the chip’s quartz window. Programming is electrical; erase requires removing the chip and shining UV on it for around half an hour.

EEPROM (Electrically Erasable PROM)

Like EPROM but with a thinner oxide that allows tunneling. Erase is electrical and can target individual bytes. Used for small configuration storage, BIOS settings, and microcontroller calibration data.

Flash

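A specialized EEPROM optimized for density. Erase happens in blocks rather than individual bytes, which makes it cheaper per bit: NOR Flash commonly erases 4 KB or 64 KB sectors, and NAND Flash erase blocks are larger still. Programming can only change bits from 1 to 0, so changing stored data generally means erasing its whole block first. NOR Flash supports byte-addressable reads, while NAND Flash reads whole pages. Modern SSDs and USB drives (both NAND), and microcontroller program memory, are all Flash.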
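The erase-before-write rule is easiest to see in a toy model. The Python sketch below assumes NOR-style behavior: erased bytes read 0xFF, programming can only clear bits (it ANDs the new value into the cell), and erase works on whole 4 KB sectors. `ToyFlash` and its methods are hypothetical names. A RAM-style overwrite (`update_byte`) has to buffer the sector, erase it, and reprogram it, and the per-sector erase counter shows where wear comes from. On a byte-erasable EEPROM, the sector buffer would not be needed.

```python
class ToyFlash:
    """Hypothetical NOR-style Flash: erased bytes read 0xFF, programming can
    only clear bits, and erase works on whole sectors."""

    def __init__(self, size: int, sector_size: int) -> None:
        if size % sector_size:
            raise ValueError("size must be a whole number of sectors")
        self.sector_size = sector_size
        self.data = bytearray(b"\xff" * size)
        self.erase_counts = [0] * (size // sector_size)  # wear per sector

    def erase_sector(self, sector: int) -> None:
        start = sector * self.sector_size
        self.data[start:start + self.sector_size] = b"\xff" * self.sector_size
        self.erase_counts[sector] += 1

    def program_byte(self, address: int, value: int) -> None:
        # Programming ANDs the value in: bits can go 1 -> 0 but never 0 -> 1.
        self.data[address] &= value

    def update_byte(self, address: int, value: int) -> None:
        # RAM-style overwrite: buffer the sector, erase it, reprogram it.
        sector = address // self.sector_size
        start = sector * self.sector_size
        buffer = bytearray(self.data[start:start + self.sector_size])
        buffer[address - start] = value
        self.erase_sector(sector)
        for offset, byte in enumerate(buffer):
            if byte != 0xFF:  # erased bytes already read 0xFF
                self.program_byte(start + offset, byte)


flash = ToyFlash(size=8192, sector_size=4096)
flash.program_byte(10, 0x0F)
flash.program_byte(10, 0xF0)      # naive overwrite: bits can only be cleared
print(hex(flash.data[10]))        # 0x0, not 0xf0
flash.update_byte(10, 0xF0)       # correct, but costs a full-sector erase
print(hex(flash.data[10]), flash.erase_counts)  # 0xf0 [1, 0]
```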

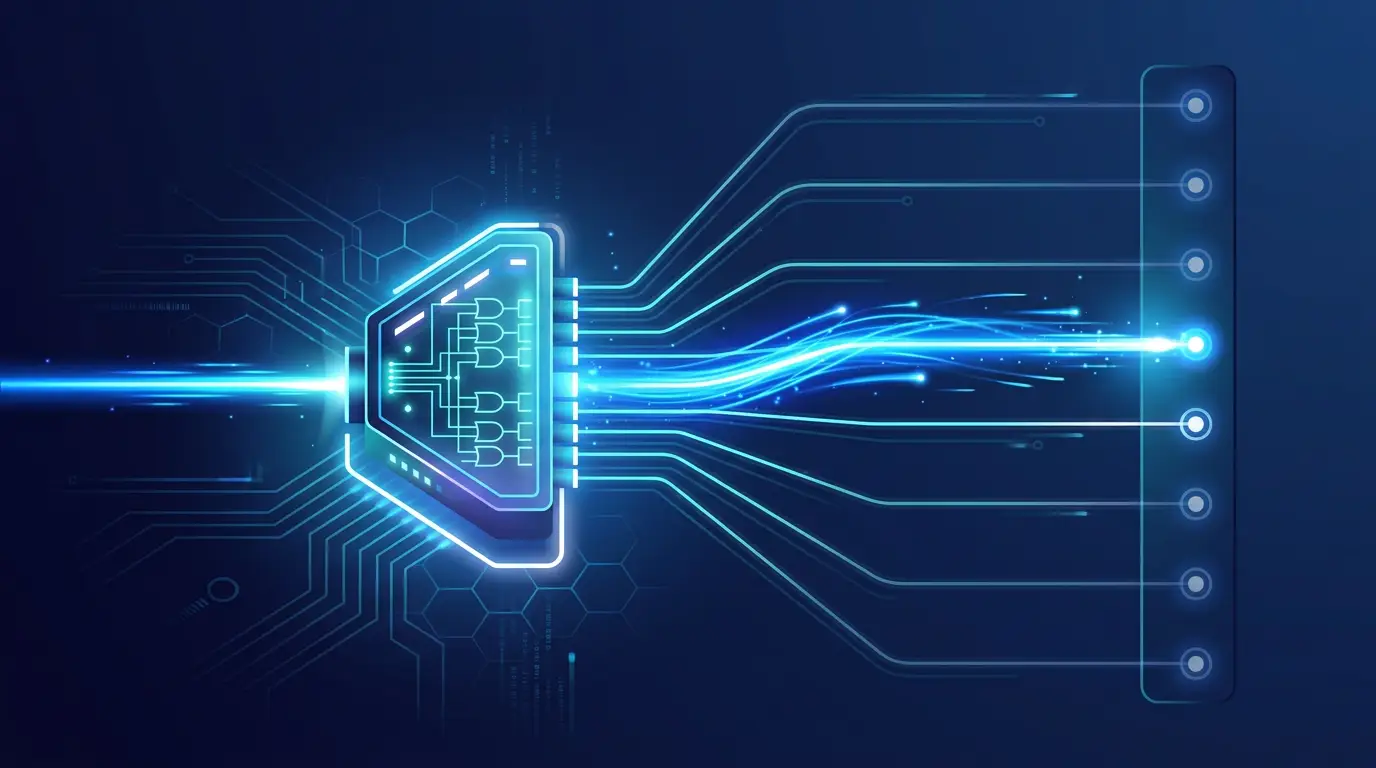

How a Memory Chip Is Read

Both RAM and ROM share the same external interface and the same internal addressing scheme. A memory chip with $2^n$ words of $w$ bits each has:

- An $n$-bit address bus input.

- A $w$-bit data bus (input + output, or two separate buses).

- A chip select (CS), read enable (RE / OE), and — for RAM — write enable (WE).

Inside, the structure is:

```text
             address[n-1:0]
                   │
                   ▼
          ┌──────────────────┐
          │ Address decoder  │ ── selects one word line
          │    (1 of 2^n)    │    out of 2^n
          └──────────────────┘
                   │
                   ▼
  ┌───────┬───────┬─── ... ───┬───────┐
  │ word0 │ word1 │           │ wordN │ ←── one word line per row
  ├───────┼───────┼─── ... ───┼───────┤
  │       │       │           │       │
  │ cells, each on a bit line per column
  │       │       │           │       │
  └───────┴───────┴─── ... ───┴───────┘
      │       │         │         │
      ▼       ▼         ▼         ▼
  bit_line[0..w-1] ── sense amps ── data_bus
```

The Address Decoder

The address decoder is exactly the decoder component covered in Decoders and Encoders Driving a 7-Segment Display. For an $n$-bit address it produces $2^n$ output lines, exactly one of which is high. Each output line is a word line: it activates one row of cells.

A 4-bit address gives 16 word lines. An 8-bit address gives 256. Real memory chips have row addresses of 13–16 bits, decoded by a tree of smaller decoders rather than one giant fan-out.
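A flat decoder and its tree form can be compared directly. The Python sketch below (function names hypothetical) builds an n-to-2^n decoder as a list of word lines, then builds the same 8-to-256 decoder in two levels: the high and low address nibbles each go through a 4-to-16 decoder, and each word line is a 2-input AND of one output from each. This two-level "predecode" arrangement is one common way to form the tree; it is a sketch, not a description of any particular chip.

```python
def decode(address: int, n: int) -> list[int]:
    """n-to-2**n decoder: returns 2**n word lines with exactly one high."""
    if not 0 <= address < (1 << n):
        raise ValueError("address out of range")
    return [1 if line == address else 0 for line in range(1 << n)]


def decode_two_level(address: int, n: int) -> list[int]:
    """Same function built as a two-level tree (assumes n is even):
    decode each half of the address separately, then AND one output
    from each half to form every word line."""
    half = n // 2
    high = decode(address >> half, half)             # e.g. 4-to-16
    low = decode(address & ((1 << half) - 1), half)  # e.g. 4-to-16
    # Row index = high_index * 2**half + low_index, matching the address.
    return [h & l for h in high for l in low]        # 2-input ANDs


lines = decode_two_level(0xA7, 8)   # 8-bit address, 256 word lines
print(lines.index(1), sum(lines))   # 167 1
print(lines == decode(0xA7, 8))     # True
```

In gate terms, the flat 8-to-256 version needs 256 eight-input AND gates, with each address line (or its complement) fanning out to 128 of them; the two-level version needs 32 four-input ANDs plus 256 two-input ANDs, and each predecoded line fans out to only 16 gates.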

Word Lines and Bit Lines

When the decoder asserts word line $i$, every cell in row $i$ connects to its column’s bit line. Cells in unselected rows stay disconnected from the bit lines and do not drive them.

For a read:

- The selected row’s cells drive their stored values onto the bit lines.

- Sense amplifiers detect the small voltage differences and convert them to clean digital levels.

- Output drivers place the result on the data bus when chip select and read enable are both asserted.

For a write (RAM only):

- The selected row connects its cells to the bit lines.

- Write drivers force the bit lines to the desired values.

- The cells latch the values when the write strobe deasserts.
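The control-signal rules in the read and write steps above can be sketched at the pin level. The Python model below is hypothetical and assumes active-high CS, OE, and WE (real parts often use active-low pins). It returns `None` to stand for an undriven (high-impedance) data bus, and it latches write data when the write ends, that is, when CS or WE deasserts.

```python
from typing import List, Optional, Tuple


class MemoryChip:
    """Hypothetical pin-level RAM model with active-high CS, OE and WE.

    Call step() once per time step with the current pin values; it returns
    the data bus value, or None when the chip leaves the bus undriven.
    """

    def __init__(self, words: int, width: int) -> None:
        self.rows: List[int] = [0] * words
        self.mask = (1 << width) - 1
        self.pending: Optional[Tuple[int, int]] = None  # write in progress

    def step(self, address: int, cs: int, oe: int, we: int, data_in: int) -> Optional[int]:
        if cs and we:
            # Word line `address` is selected; write drivers force its bit lines.
            self.pending = (address, data_in & self.mask)
        elif self.pending is not None:
            # CS or WE deasserted: the cells latch the last driven values.
            row, value = self.pending
            self.rows[row] = value
            self.pending = None
        if cs and oe and not we:
            # Read: output drivers place the selected word on the data bus.
            return self.rows[address]
        return None  # high impedance: the chip does not drive the bus


chip = MemoryChip(words=16, width=8)
chip.step(address=0x3, cs=1, oe=0, we=1, data_in=0x5C)    # drive the write
chip.step(address=0x3, cs=1, oe=0, we=0, data_in=0)       # WE falls: latch
print(hex(chip.step(address=0x3, cs=1, oe=1, we=0, data_in=0)))  # 0x5c
print(chip.step(address=0x3, cs=0, oe=1, we=0, data_in=0))       # None
```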

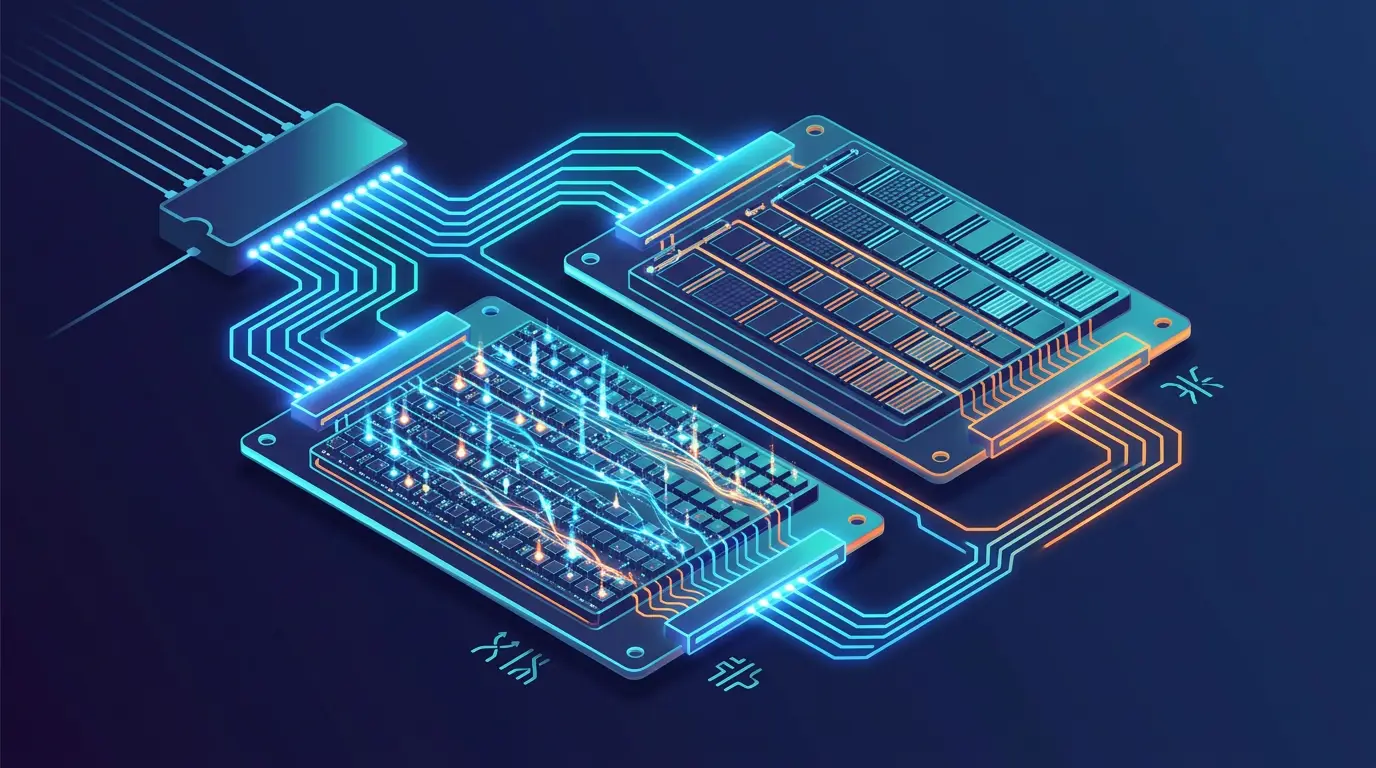

Worked Example: A 16-Byte RAM

Build a memory with 16 words × 8 bits. The address bus is 4 bits (since $2^4 = 16$); the data bus is 8 bits.

Component count:

- Decoder: 4-to-16, exactly the structure scaled up from the 3-to-8 decoder in DigiSim.

- Cells: 16 × 8 = 128 single-bit cells. In a register-file-style RAM these are D flip-flops; in a real DRAM they would be 1T1C cells.

- Bit lines: 8 columns, each tying together every cell in that column.

- Read multiplexer: A bank of eight 16-to-1 muxes (one per bit) gates the selected word’s cells onto the data bus. The same 4-bit address that drives the decoder also drives the select inputs of these muxes.

- Write demux: Implicit — the decoder’s word-line output combined with the chip’s write-enable acts as the per-row write strobe.

Address breakdown:

| Address (binary) | Address (hex) | Word line activated |
|---|---|---|
| 0000 | 0x0 | WL0 |
| 0001 | 0x1 | WL1 |
| 0010 | 0x2 | WL2 |
| ⋮ | ⋮ | ⋮ |
| 1111 | 0xF | WL15 |

Reading address 0x5: decoder activates WL5; the eight cells of word 5 drive their stored bits onto the bit lines; the data bus shows those eight bits.

Writing 0xA3 to address 0x9: decoder activates WL9; the write drivers force the bit lines to 1010 0011; on the falling edge of WE, word 9’s cells latch the new values.
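The worked example can be traced in code. This Python sketch of a hypothetical `Ram16x8` class follows the component list above: a 4-to-16 decoder, 128 single-bit cells, one 16-to-1 mux per bit column selected by the address, and a per-row write strobe formed from the word line AND write enable. For brevity it treats a write as instantaneous rather than latching on the falling edge of WE.

```python
class Ram16x8:
    """Structural sketch of the 16-word x 8-bit RAM (hypothetical class).

    cells[row][bit] is one single-bit cell, standing in for a D flip-flop.
    """

    WORDS, WIDTH = 16, 8

    def __init__(self) -> None:
        self.cells = [[0] * self.WIDTH for _ in range(self.WORDS)]

    @staticmethod
    def decode(address: int) -> list[int]:
        # 4-to-16 decoder: one word line per row, exactly one high.
        return [1 if row == address else 0 for row in range(16)]

    def read(self, address: int) -> int:
        value = 0
        for bit in range(self.WIDTH):
            # One 16-to-1 mux per bit column, with the address as its select.
            column = [self.cells[row][bit] for row in range(self.WORDS)]
            value |= column[address] << bit
        return value

    def write(self, address: int, data: int, we: int = 1) -> None:
        for row, word_line in enumerate(self.decode(address)):
            if word_line and we:  # per-row write strobe = word line AND WE
                for bit in range(self.WIDTH):
                    self.cells[row][bit] = (data >> bit) & 1


ram = Ram16x8()
ram.write(0x9, 0xA3)
print(hex(ram.read(0x9)))   # 0xa3
print(ram.cells[0x9])       # [1, 1, 0, 0, 0, 1, 0, 1]  (bit 0 first)
print(hex(ram.read(0x5)))   # 0x0: never written
```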

The simulator’s Basic RAM Memory System and RAM with Address Control templates implement exactly this structure.

For ROM, the ROM Memory Demonstration Circuit replaces the writable cells with hard-wired connections — the cell is “1” if there is a wire from the word line to the bit line, “0” if there is not.

Endianness — A Brief Note

Once you have a 16-byte RAM, you immediately face the question of how to store a multi-byte value across multiple addresses. A 16-bit value 0x1234 could be laid out two ways at addresses 0x0 and 0x1:

| Endianness | Address 0x0 | Address 0x1 |
|---|---|---|
| Big-endian | 0x12 | 0x34 |
| Little-endian | 0x34 | 0x12 |

x86 and ARM (in default mode) are little-endian: the least significant byte goes at the lowest address. Network protocols and historical mainframes are big-endian. The choice is invisible to single-byte accesses but critical for multi-byte loads, stores, and any cross-platform binary file format. Endianness is a property of the CPU’s load/store unit, not the memory itself — the RAM cells store bytes; the CPU decides how to interpret a sequence of them.
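Python's standard `struct` module makes both layouts concrete: the `>` and `<` format prefixes pack a 16-bit unsigned value (`H`) big-endian and little-endian respectively. Treating the index of each resulting byte as an address offset reproduces the table above, and `int.from_bytes` shows the same two stored bytes read back under either interpretation.

```python
import struct

value = 0x1234
big = struct.pack(">H", value)     # big-endian unsigned 16-bit
little = struct.pack("<H", value)  # little-endian unsigned 16-bit

# Byte i of each result is what would be stored at address 0x0 + i.
print([hex(b) for b in big])       # ['0x12', '0x34']
print([hex(b) for b in little])    # ['0x34', '0x12']

# The RAM just holds bytes; the reader chooses the interpretation.
memory = bytes([0x34, 0x12])
print(hex(int.from_bytes(memory, "little")))  # 0x1234
print(hex(int.from_bytes(memory, "big")))     # 0x3412
```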

Common Pitfalls

- Conflating SRAM speed with DRAM cost. Caches use SRAM because they need access within a few CPU cycles. Main memory uses DRAM because gigabytes of SRAM would be unaffordable. Choosing the wrong technology for the role is a costly error in memory hierarchy design.

- Ignoring DRAM refresh in simulation. A DRAM model that never decays gives misleading worst-case latencies. For pedagogy this is fine; for real performance modeling, refresh has to be in the model.

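- Forgetting Flash’s erase-before-write rule. Code that treats Flash like RAM and overwrites single bytes in place will either fail (programming can only clear bits, never set them) or, if it erases a whole block for every byte written, wear out the device quickly. Flash file systems batch writes into block-sized erase-and-rewrite operations.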

- Address decoder fanout. A decoder for a large address space cannot be one giant gate; it must be a tree. Beginners drawing 8-to-256 decoders as a single layer of 256 8-input AND gates run into delay and routing issues.

Build It in DigiSim

Open the three memory templates side by side:

- Basic RAM Memory System — minimum viable RAM with address, data-in, data-out, and write-enable.

- RAM with Address Control — adds the address-counter wiring needed to step through memory under clock control.

- ROM Memory Demonstration Circuit — same external interface, no write port, contents fixed at design time.

Step through each template’s address inputs and watch the decoder’s word-line outputs as the address increments: going from 0x5 to 0x6 drops WL5 and raises WL6. That hand-off of the single active word line is the entire mechanism that distinguishes “address 0x5” from “address 0x6” in every memory chip ever made.

For the broader story of how memory ties into CPU execution, the Fetch-Decode-Execute case study walks through how a word fetched from memory becomes an instruction operating on the register file built from 4-bit registers. From there, every program is just patterns of reads and writes against the structures laid out above.