Static vs Dynamic Hazards in Combinational Logic

TL;DR: A static hazard is a momentary glitch on an output that should have stayed constant; a dynamic hazard is multiple transitions on an output that should have changed exactly once. Both are caused by races between paths of unequal propagation delay. The textbook fix for static hazards is to add a redundant prime-implicant cover term that bridges adjacent K-map groups — the truth table is unchanged, but the glitch is gone.

A two-level AND-OR network can be functionally correct on paper and still produce visible glitches in silicon. The truth table says the output stays at 1 for a particular input transition, the simulator with zero gate delay agrees, and yet a real circuit drops briefly to 0 and bounces back. That brief drop is a hazard, and once you know how to spot one on a Karnaugh map you can also remove it with a single extra gate.

What is a Hazard?

A hazard is the possibility of an unwanted output transition caused by unequal propagation delays through different paths of a combinational circuit. The hazard is a property of the network, not of any particular input pattern; whether it produces a visible glitch depends on which signal arrives first.

Hazards split into two main families.

A static hazard occurs when the output is supposed to stay constant — either at 1 or at 0 — across an input change, but it momentarily flips to the opposite value before settling.

A dynamic hazard occurs when the output is supposed to make exactly one transition (0 to 1 or 1 to 0), but instead transitions multiple times before settling. Dynamic hazards typically appear in three-level or deeper networks where multiple internal races compound.

The root cause is always the same: a signal reaches the final gate via two paths with different delays, and during the brief window when those paths disagree the gate produces a wrong output.

Static-1 and Static-0 Hazards

A static-1 hazard lives on the AND-OR side of the world. The output is supposed to remain at 1 across some input transition. Internally, two different product terms hold the output at 1, one before the transition and one after it, so the transition hands responsibility from one product term to the other. If the deactivating term turns off slightly before the activating term turns on, the OR gate sees neither term active for a gate delay or so and the output dips to 0.

A static-0 hazard is the dual on the OR-AND side. The output is supposed to remain at 0, two sum terms each force it to 0 for the relevant input pattern, and a transition hands responsibility from one sum term to the other. If one releases before the other engages, the AND of the two sums briefly sees both at 1 and the output spikes to 1.

Both flavours come from the same underlying race. By convention the static-1 form is the one most often discussed because most synthesis flows produce sum-of-products networks; see the SOP guide for the form a static-1 hazard lives in. The dual analysis applies to product-of-sums forms without modification.
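The static-0 case can be reproduced in a few lines of Python. This is a minimal sketch, assuming a hypothetical product-of-sums function F = (A + B)(A' + C) that does not appear in the text, and assuming that only the inverter has delay (one time step) while the OR and AND gates are ideal.

```python
def pos_output(a_wave, b=0, c=0, inv_delay=1):
    """Waveform of F = (A + B) * (not A + C) with a delayed inverter.

    Assumption: only the inverter has delay; OR and AND gates are ideal.
    """
    out = []
    for t in range(len(a_wave)):
        a_now = a_wave[t]
        # Inverter output lags A by inv_delay steps (clamped at t = 0).
        not_a = 1 - a_wave[max(t - inv_delay, 0)]
        out.append((a_now | b) & (not_a | c))
    return out


# A rises from 0 to 1 while B = C = 0; F should stay at 0 throughout.
rising_a = [0, 0, 1, 1, 1]
print(pos_output(rising_a))               # delayed inverter
print(pos_output(rising_a, inv_delay=0))  # ideal inverter
```

With the delayed inverter, both sum terms are briefly 1 in the step where A rises, so the output spikes to 1 for one step. With an ideal inverter the output stays at 0.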

A Concrete Static-1 Hazard

Consider the function:

$$F = AB + \overline{A}C$$

This is a classic teaching example because the hazard is easy to construct by hand. It is the function that selects $B$ when $A = 1$ and $C$ when $A = 0$, which makes it equivalent to a 2-to-1 multiplexer. Build it from gates: one inverter for $A$, two AND gates, and one OR gate.

Hold $B = 1$ and $C = 1$. The output should be 1 regardless of $A$, because:

- When $A = 1$: $AB = 1$, so $F = 1$.

- When $A = 0$: $\overline{A}C = 1$, so $F = 1$.

Now toggle $A$ from 1 to 0 with $B$ and $C$ pinned at 1.

| Time | $A$ | $\overline{A}$ | $AB$ | $\overline{A}C$ | $F$ |
|---|---|---|---|---|---|
| Steady | 1 | 0 | 1 | 0 | 1 |
| $A$ falls | 0 | 0 | 0 | 0 | 0 |
| NOT settles | 0 | 1 | 0 | 1 | 1 |

In the middle row, just after $A$ falls, the input has already gone low, so $AB$ is 0. But the inverter has not yet produced a 1 on $\overline{A}$, so $\overline{A}C$ is also still 0. For one inverter delay, both product terms are 0 and the OR gate output drops to 0. That brief dip is the static-1 hazard. It vanishes the moment the inverter catches up, but if the dip happens to be sampled by a downstream flip-flop, the wrong value gets latched.
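The same race can be sketched in Python. This is a minimal discrete-time model, assuming that only the inverter has delay (`inv_delay` time steps, a name chosen here for illustration) and that the AND and OR gates respond instantly, which isolates the one race that matters.

```python
def simulate(a_wave, b=1, c=1, inv_delay=1):
    """Return the F waveform for F = A*B + (not A)*C.

    Assumption: only the inverter has delay; AND and OR gates are ideal.
    """
    f_wave = []
    for t, a in enumerate(a_wave):
        # The inverter output at time t reflects A from inv_delay steps ago.
        a_past = a_wave[max(t - inv_delay, 0)]
        not_a = 1 - a_past
        f_wave.append((a & b) | (not_a & c))
    return f_wave


# A falls from 1 to 0 at t = 2 while B = C = 1.
a_wave = [1, 1, 0, 0, 0]
print(simulate(a_wave))               # delayed inverter
print(simulate(a_wave, inv_delay=0))  # ideal inverter
```

With the delayed inverter, the output is 0 in the single step where A has fallen but the inverter has not yet responded. With an ideal inverter the output never leaves 1, which is why a zero-delay simulation hides the hazard.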

Spotting the Hazard on a Karnaugh Map

Plot the function on a K-map for $A$, $B$, $C$:

                  BC
         00   01   11   10
       +----+----+----+----+
    A=0| 0  | 1  | 1  | 0  |
       +----+----+----+----+
    A=1| 0  | 0  | 1  | 1  |
       +----+----+----+----+

Two prime implicants cover the 1-cells: $\overline{A}C$ wraps the two top-row cells where $C = 1$, and $AB$ wraps the right two cells of the bottom row, where $A = 1$ and $B = 1$. The minimal SOP picks both, which is exactly the function above.

The two groups touch at cell $ABC = 011$ on one side and $ABC = 111$ on the other. Toggle $A$ between those two cells and you cross from one group to the other. A static-1 hazard exists exactly when an input transition moves between two adjacent 1-cells with no single group covering both. That is the K-map signature: two adjacent 1-cells that do not share a covering group.

The visualisation is easy on a Karnaugh map: if you can move between two adjacent 1-cells without staying inside a single oval, the network has a static hazard along that transition.
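The K-map check can also be done mechanically. The sketch below is one possible way to do it, not a standard tool: it represents each product term as a hypothetical `{variable: required value}` dictionary and lists every pair of adjacent 1-cells that no single term covers.

```python
from itertools import product


def covers(term, cell):
    """True if a product term (dict var -> required value) contains the cell."""
    return all(cell[var] == val for var, val in term.items())


def static1_hazards(terms, variables="ABC"):
    """List adjacent 1-cell pairs that no single product term covers."""
    cells = [dict(zip(variables, bits))
             for bits in product((0, 1), repeat=len(variables))]
    ones = [c for c in cells if any(covers(t, c) for t in terms)]
    hazards = []
    for cell in ones:
        for var in variables:
            if cell[var] == 1:
                continue  # visit each pair once, from its var = 0 side
            neighbour = {**cell, var: 1}
            if neighbour not in ones:
                continue
            if not any(covers(t, cell) and covers(t, neighbour) for t in terms):
                hazards.append((var, cell, neighbour))
    return hazards


# F = AB + A'C, written as {variable: required value}.
cover = [{"A": 1, "B": 1}, {"A": 0, "C": 1}]
for var, low_cell, high_cell in static1_hazards(cover):
    print(f"toggle {var}: {low_cell} <-> {high_cell}")
```

For this cover the only pair reported is the one where A toggles with B = C = 1, which is the seam between the two groups on the map.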

The Fix: Redundant Cover

Hazard removal works by adding a redundant prime implicant whose group spans the two cells the input transition crosses. In the example, both 1-cells in the $BC = 11$ column, one in each row, fall inside the single group $BC$. Add it:

$$F = AB + \overline{A}C + BC$$

The truth table is unchanged. By the consensus theorem, $XY + \overline{X}Z + YZ = XY + \overline{X}Z$, the new term $BC$ is the consensus of $AB$ and $\overline{A}C$, so the function is identical. But during the transition of $A$ with $B = C = 1$, the new product $BC$ is 1 the whole time. The OR gate now has at least one input pinned at 1 throughout the race, and the dip cannot occur.
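For readers who want the algebra spelled out, one way to confirm that the extra term is redundant is to expand $BC$ against $A + \overline{A} = 1$ and absorb the pieces:

$$
\begin{aligned}
AB + \overline{A}C + BC &= AB + \overline{A}C + BC\,(A + \overline{A}) \\
&= AB + \overline{A}C + ABC + \overline{A}BC \\
&= AB\,(1 + C) + \overline{A}C\,(1 + B) \\
&= AB + \overline{A}C
\end{aligned}
$$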

                  BC
         00   01   11   10
       +----+----+----+----+
    A=0| 0  | 1  | [1]| 0  |
       +----+----+----+----+
    A=1| 0  | 0  | [1]| 1  |
       +----+----+----+----+

The bracketed cells are the new group. It bridges the two original groups exactly across the hazardous transition.
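A quick brute-force check of both claims, as a sketch: the inverter output is passed in as a separate argument (`not_a`, a name chosen here) so that the race window, where it has not yet caught up with A, can be evaluated directly.

```python
from itertools import product


def f_minimal(a, b, c, not_a):
    """F = AB + A'C, with the inverter output supplied separately."""
    return (a & b) | (not_a & c)


def f_covered(a, b, c, not_a):
    """F = AB + A'C + BC, the same function with the redundant cover term."""
    return (a & b) | (not_a & c) | (b & c)


# Steady state: the inverter has settled, so not_a == 1 - a.
same_table = all(
    f_minimal(a, b, c, 1 - a) == f_covered(a, b, c, 1 - a)
    for a, b, c in product((0, 1), repeat=3)
)

# Race window: A has fallen to 0 but the inverter still outputs 0.
dip_minimal = f_minimal(0, 1, 1, 0)
dip_covered = f_covered(0, 1, 1, 0)

print("same truth table:", same_table)
print("output during race, minimal cover:", dip_minimal)
print("output during race, with BC term:", dip_covered)
```

The two forms agree on all eight settled input combinations, and only the minimal form evaluates to 0 inside the race window.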

The general procedure is:

- List every pair of adjacent 1-cells on the K-map.

- For each pair, check whether some prime implicant in your minimal cover contains both cells.

- If not, add a prime implicant that does. It is always an existing prime implicant of the function — never a new one synthesized from scratch.

This is sometimes called hazard cover or consensus cover. It is the simplest static-hazard removal method and works for any two-level AND-OR network with single-variable input changes.
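The procedure above can be sketched in Python for a small function. This is an illustrative implementation under stated assumptions, not a production minimiser: product terms are hypothetical `{variable: required value}` dictionaries, the variable set is fixed to A, B, C, and the covering prime implicant is found by greedily dropping literals from the two-cell term.

```python
from itertools import product

VARS = "ABC"


def covers(term, cell):
    return all(cell[v] == val for v, val in term.items())


def is_implicant(term, on_set):
    """A term is an implicant if every cell it covers is a 1-cell."""
    cells = (dict(zip(VARS, bits)) for bits in product((0, 1), repeat=len(VARS)))
    return all(c in on_set for c in cells if covers(term, c))


def expand_to_prime(term, on_set):
    """Greedily drop literals while the term remains an implicant."""
    term = dict(term)
    for v in list(term):
        trial = {k: val for k, val in term.items() if k != v}
        if is_implicant(trial, on_set):
            term = trial
    return term


def add_hazard_cover(terms):
    """Return the cover plus a prime implicant for each uncovered adjacent pair."""
    cells = [dict(zip(VARS, bits)) for bits in product((0, 1), repeat=len(VARS))]
    on_set = [c for c in cells if any(covers(t, c) for t in terms)]
    result = list(terms)
    for cell in on_set:
        for v in VARS:
            neighbour = {**cell, v: 1 - cell[v]}
            if neighbour not in on_set:
                continue  # not a pair of adjacent 1-cells
            if any(covers(t, cell) and covers(t, neighbour) for t in result):
                continue  # some term already spans the pair
            pair_term = {k: val for k, val in cell.items() if k != v}
            result.append(expand_to_prime(pair_term, on_set))
    return result


print(add_hazard_cover([{"A": 1, "B": 1}, {"A": 0, "C": 1}]))
```

For the running example the only term added is the one with B = 1 and C = 1, matching the bridge drawn on the map.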

Dynamic Hazards

Static hazards are about a single race producing a single glitch on a constant signal. Dynamic hazards are about multiple races producing multiple transitions on a signal that was supposed to change exactly once.

Consider a three-level network of the form:

A change on propagates through both and . Each of those signals then meets the third term inside an AND. If the two paths through the network see different delay chains, the AND can see the input change, then revert, then change again as the slower path catches up. The output makes 0-1-0-1 transitions instead of one clean 0-1.
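A dynamic hazard can be reproduced in a small discrete-time simulation. The sketch below uses a hypothetical network chosen purely for illustration, F = (AB + A'C) · A' with two separate inverters of different delay; the network, the delay values, and the helper names are all assumptions and are not necessarily the network described above. Every gate uses a simple transport-delay model.

```python
def gate(fn, delay, *inputs):
    """Apply fn to input waveforms with a fixed transport delay.

    Indices before t = 0 are clamped, i.e. the circuit starts settled.
    """
    n = len(inputs[0])
    return [fn(*(w[max(t - delay, 0)] for w in inputs)) for t in range(n)]


def inv(x):
    return 1 - x


def and2(x, y):
    return x & y


def or2(x, y):
    return x | y


def dynamic_hazard_demo(slow_inv_delay=3, steps=10):
    a = [1, 1] + [0] * (steps - 2)             # A falls at t = 2
    one = [1] * steps                          # B and C are held at 1
    not_a_fast = gate(inv, 1, a)               # short path to the final AND
    not_a_slow = gate(inv, slow_inv_delay, a)  # long path into the AND-OR stage
    p1 = gate(and2, 1, a, one)                 # A * B
    p2 = gate(and2, 1, not_a_slow, one)        # A' * C
    g = gate(or2, 1, p1, p2)                   # AB + A'C (glitches 1-0-1)
    return gate(and2, 1, g, not_a_fast)        # F = (AB + A'C) * A'


def transitions(wave):
    """Count how many times a waveform changes value."""
    return sum(x != y for x, y in zip(wave, wave[1:]))


f = dynamic_hazard_demo()
print(f, "transitions:", transitions(f))
```

With the default delays the output rises, falls back, and rises again, giving three transitions where the settled logic calls for a single 0-to-1 change.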

Dynamic hazards require at least three levels of logic with reconvergent paths. They are rare in two-level minimal SOP networks because there is no third level of logic in which races can compound. They appear in:

- Multi-level networks produced by technology mapping into NAND/NOR cells.

- Networks with shared sub-expressions where the shared signal feeds multiple downstream gates with different delays.

- Hand-optimised circuits that trade gate count for depth.

The cure is harder than for static hazards. There is no single redundant cover term that fixes a dynamic hazard in general, because multiple races can interact. Practical mitigations include:

- Delay balancing. Insert buffers on the faster path so all reconvergent paths arrive together.

- Resynthesis. Re-derive the network from the truth table directly to a two-level form, eliminating the multi-level structure that hosts the hazard.

- Careful technology mapping. Some synthesis flows can recognise hazard-prone topologies and restructure them, but this should not be assumed of a general-purpose synchronous flow.

- Latching the output. If the output feeds a clocked flip-flop and the clock period is longer than all settling races combined, the multiple transitions are invisible at the next clock edge. This is the standard escape hatch in synchronous design — see propagation delay for the timing budget that makes it work.
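As a rough sketch of that timing budget, assume the following symbol names, none of which appear in the text: $T_{clk}$ for the clock period, $t_{cq}$ for the launching flip-flop's clock-to-output delay, $t_{settle}$ for the worst-case time until the last glitch on the combinational output has died out, and $t_{su}$ for the capturing flip-flop's setup time. The glitches stay invisible when:

$$T_{clk} \ge t_{cq} + t_{settle} + t_{su}$$

Clock skew and hold-time checks are left out of this simplified form.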

Why You Should Care About Hazards

In a fully synchronous design where every combinational output feeds a flip-flop, hazards are usually harmless: the flip-flop only samples after the network has settled, so a glitch that ends inside the clock period never escapes. The clock period absorbs the noise.

Three classes of design break that assumption.

Asynchronous logic. State elements are level-sensitive or self-timed, so any glitch on a feedback path can be latched as a real state change. A combinational hazard that would be harmless in a clocked design can therefore drive an asynchronous circuit into the wrong state. The textbook example is the SR latch, an asynchronous element built from cross-coupled NOR gates that is sensitive to glitches on either input.

Edge-detect and trigger circuits. A combinational network that drives the input to a setup/hold-sensitive flip-flop near the clock edge can latch a glitch as a real value. Modern flip-flops are spec’d against this, but it is a real failure mode in tight timing.

Outputs that drive the outside world. A combinational signal that toggles a piece of physical hardware — a relay, a chip-select, an interrupt line — must be glitch-free even if the internal logic is synchronous. Glitches manifest as spurious chip selects, double-counted interrupts, or chattering relays.

In all three cases, the hazard cover term is cheap insurance. One extra AND gate per K-map seam is a much smaller price than chasing a metastable counter.

Essential Hazards in Asynchronous Logic

A brief mention is warranted for completeness. Essential hazards are not caused by uneven gate delay — they are caused by uneven wire delay distributing a single input signal to multiple feedback points in an asynchronous sequential circuit. The Huffman model of async design assumes the input change reaches all parts of the circuit simultaneously; when it does not, the circuit can transition through an unintended state on its way to the correct one.

Essential hazards cannot be removed by adding redundant logic. They have to be designed out by:

- Buffering the input distribution carefully.

- Using delay elements to ensure the feedback loop settles before the input reaches the next stage.

- Switching to a fundamentally synchronous design.

This is one of the deeper reasons modern digital design defaults to fully synchronous timing: clocks make essential hazards into a non-issue.

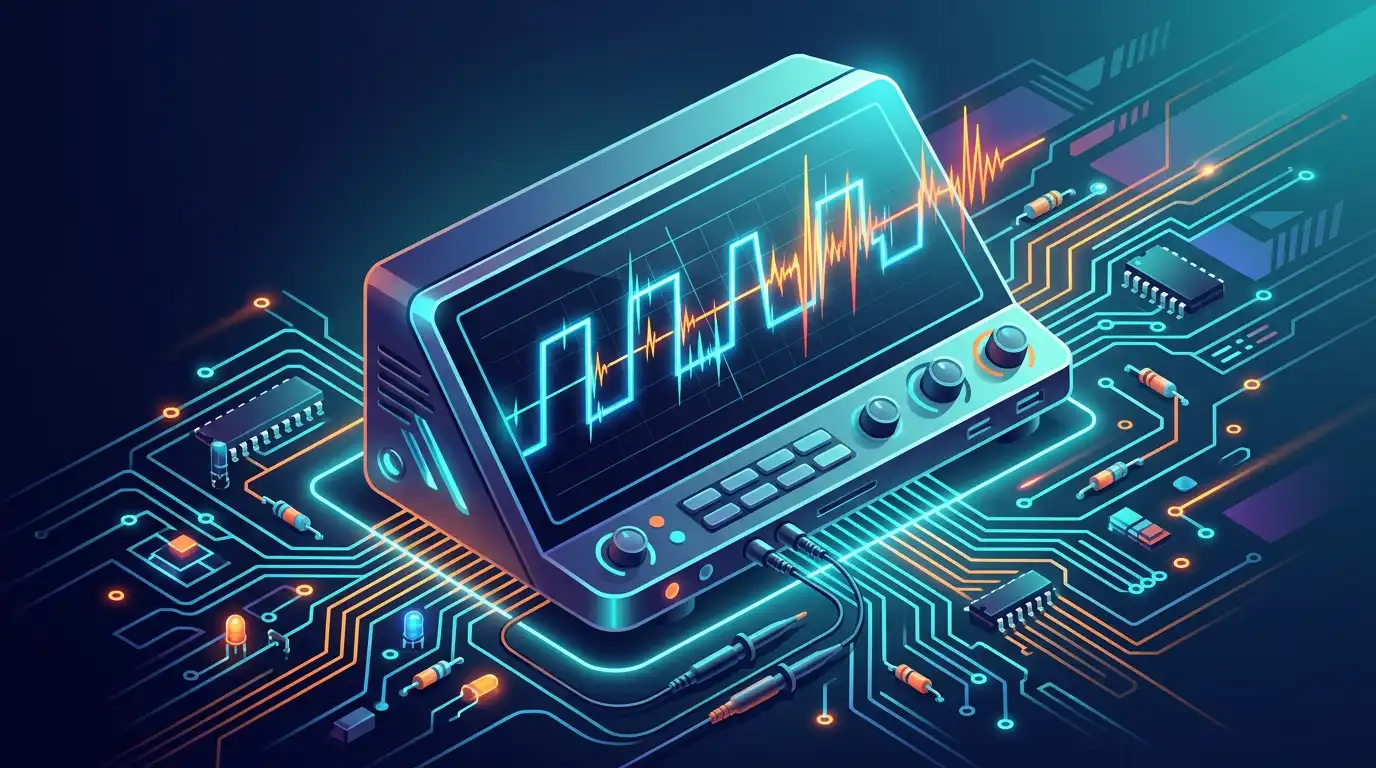

Building a Hazard in the Simulator

You can reproduce the example yourself.

- Place an INPUT_SWITCH for each of $A$, $B$, $C$. Set $B$ and $C$ both HIGH.

- Build $F = AB + \overline{A}C$ using one NOT gate, two AND gates, and one OR gate.

- Insert a delay buffer in the path between $A$ and the inverter so $\overline{A}$ takes longer to update than $A$. A few buffer cells in series will do.

- Toggle $A$ low. Watch the output on a scope-style display. You should see a clean 1 most of the time, with a brief dip to 0 each time $A$ goes low.

- Add a third AND gate for $BC$ and OR it into the output. The dip disappears.

This is the same dataflow that synthesis tools see when they detect a hazard pattern: a K-map seam crossed by a single input change, with no covering group.

What’s Next

Hazards are about static, combinational glitches. The dual problem on the sequential side — how a non-zero clock arrival difference moves a flip-flop’s effective sampling window — is covered in the upcoming clock skew and its effect on timing post. And if you want to take K-map optimization further, don’t-care conditions in Karnaugh maps shows how undefined input combinations can be exploited as free hazard cover.

Open the SR-latch demonstration template to see how a single hazard glitch can propagate into permanent state corruption — the most striking demonstration of why hazard cover matters.